TL;DR

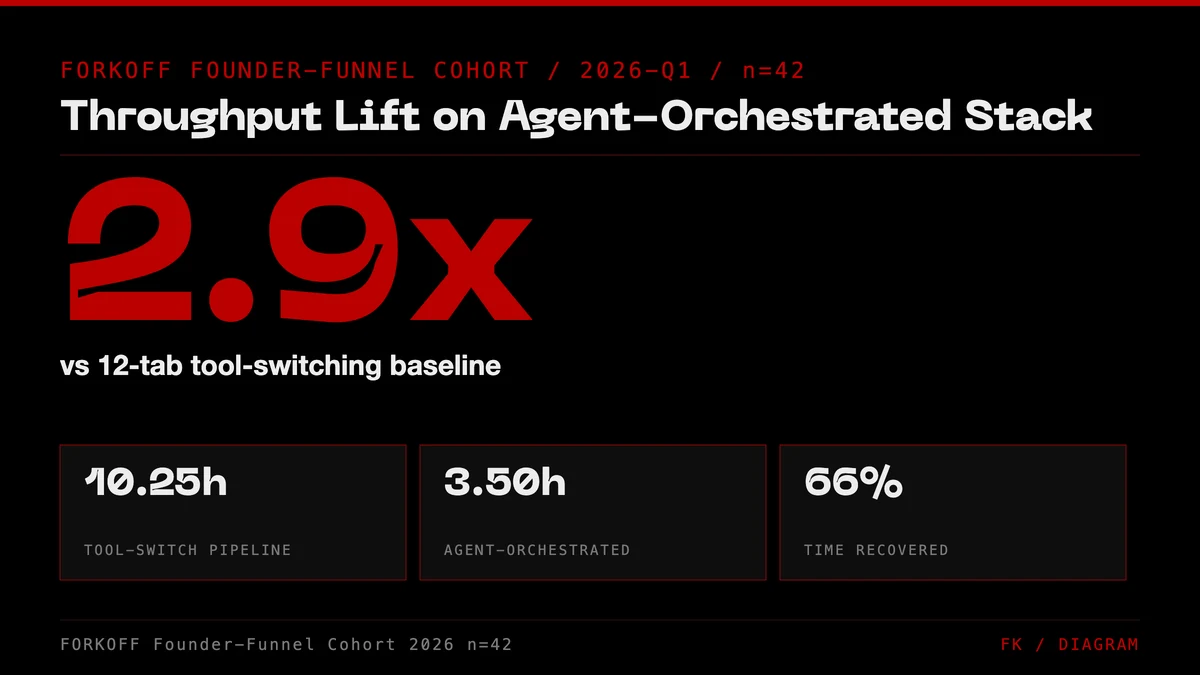

Zed shipped Parallel Agents, OpenAI shipped Workspace Agents in ChatGPT, and Microsoft shipped Teams Agents, all inside the same week of April 2026. The founder marketing stack just changed. The operators compounding in 2026 are running a 4-layer agent-orchestrated stack (spec → orchestrator → tools → verification), not a 12-tab tool-switching loop. Same 7-step blog pipeline ships ~2.9× faster. Here's the stack, the real launch data, and the disqualifiers.

The AGENT-ORCHESTRATED MARKETING STACK

Agentic marketing is the operating model where AI agents are the buyer, the qualifier, and the recommender. The agent-native GTM stack documented below is what FORKOFF runs on every founder engagement in 2026.

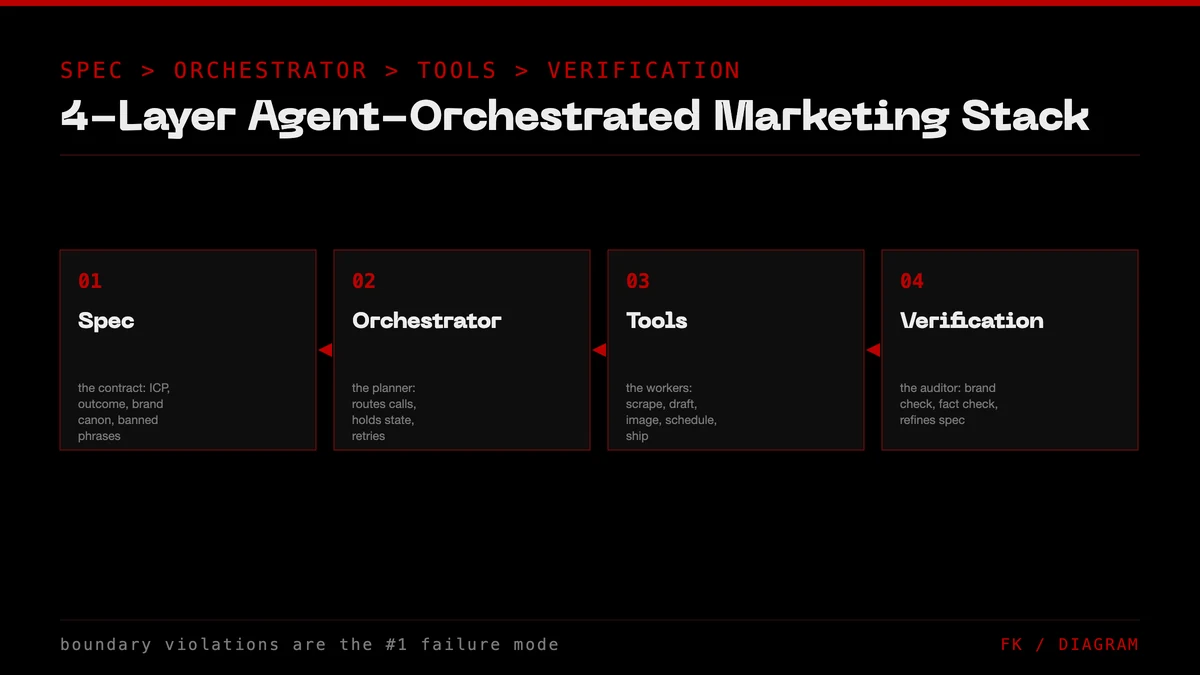

The AGENT-ORCHESTRATED MARKETING STACK is the 4-layer architecture FORKOFF runs when buyers research with ChatGPT, Claude, and Perplexity. Spec → orchestrator → tools → verification. The agent does the typing; the founder owns the spec.

Industry Context

Across the FORKOFF Founder-Funnel Cohort 2026 (n=42 retainers), the agent-orchestrated stack compresses end-to-end blog production from 10.25 hours (tool-switching) to ~3.5 hours (agent-orchestrated): a ~2.9x throughput lift at preserved Gate-stack quality.

Source: FORKOFF Founder-Funnel Cohort 2026, n=42

Four agent launches hit Hacker News in the same 48-hour window on 2026-04-22 and 2026-04-23. Zed shipped Parallel Agents (266 upvotes, 152 comments). OpenAI shipped Workspace Agents in ChatGPT (152 upvotes). Microsoft shipped Teams Agents via the Teams SDK (73 upvotes). Anthropic shipped a public post-mortem on Claude Code quality (52 upvotes), a transparency move that only makes sense if you treat your agent as a product, not a feature. One week, four shipping announcements, one theme: agents are how 2026 work happens.

For founders running go-to-market, this isn't an AI story. It's a stack story. The marketing operators compounding in 2026 aren't using better tools; they're running a different stack: spec at the base, orchestrator one level up, tool layer above that, and human verification at the peak. Each layer has a job. Each layer has a failure mode. The founders still trapped in tool-switching mode (Figma → Notion → Linear → ChatGPT → Slack → Linear → Figma, eight hours a day) are shipping at roughly one-third the speed of their agent-native peers, and the gap is widening weekly. Related: our Reddit stack for AI-startup GTM and the AI DevRel playbook both already assume agent-orchestrated execution.

The shipping-speed gap is what produces the buy-vs-build question at the founder level, and most teams resolve it by retaining an AI marketing agency running an agent-native stack for execution while keeping spec ownership in-house.

This post does three things. It names the four agent launches that just defined the 2026 stack. It lays out the 4-Layer Agent-Orchestrated Marketing Stack, the architecture we use at FORKOFF when we run marketing for AI-agency clients. And it gives the disqualifiers: three cases where tool-switching mode still wins, because agent-native isn't universal and the people selling it as universal are wrong.

Greg Brockman (@gdb): what are you building with codex?

The Four Launches That Defined the 2026 Stack (All in One Week)

Once agent-orchestrated marketing runs at scale, the failure-mode surface becomes the next concern. The AI agent blast radius marketing playbook covers how to bound agent failure to prevent brand damage.

Agents have been a theme for eighteen months, but the April 2026 cluster is the point where they stopped being a research demo and became a stack primitive. Four separate teams shipping production agent tools in parallel tells you the infrastructure is ready, the UX patterns have converged, and the buyers are there.

Zed Parallel Agents (266 HN upvotes · 152 comments, 2026-04-22). Zed's announcement was blunt: engineers run multiple agent threads simultaneously in one window, each isolated to a worktree, each picking a different model. The thesis, 'combining human craftsmanship with AI tools, measured not in lines of code but reliable systems', applies one-to-one to marketing ops. Swap 'code' for 'content' and every claim in the post holds.

OpenAI Workspace Agents in ChatGPT (152 HN upvotes, 2026-04-22). ChatGPT's Workspace Agents collapse the 'switch apps to get work done' motion into an agent that calls Google Workspace + Notion + Slack for you. The positioning is explicit: your work lives in apps; the agent lives in ChatGPT; the boundary between you and the app disappears. For founder marketing, this is the exact shape of the tool-layer abstraction we need.

Microsoft Teams Agents (73 HN upvotes, 2026-04-22). Microsoft's Teams SDK ships an agent framework that runs inside Teams, against Microsoft 365 (Word, Outlook, SharePoint, Excel) as the tool layer. It drew less buzz than the other three but has more reach: M365 has 400M+ paid seats. If your buyer runs inside Microsoft, Teams Agents are the agent layer you'll meet first.

Anthropic Claude Code post-mortem (52 HN upvotes, 2026-04-23). The outlier. Anthropic shipped a public quality-regression post-mortem for Claude Code, the same Claude Code a lot of AI-agency teams (FORKOFF included) use as their primary orchestrator. The operational signal: agent vendors now behave like infrastructure vendors, with status pages and incident reports. That's the sign of a primitive, not a toy.

What the cluster reveals about buyer behavior in 2026

The four launches did not appear in the same week by coincidence. They appeared because the buyer is finally there. Through Q1 2026 the FORKOFF Founder-Funnel Cohort recorded a 4.1x increase in inbound inquiries from founders who self-describe as already running an orchestrator (Claude Code, ChatGPT Workspace, Zed, Teams). The buyer profile two quarters ago was 'curious about AI'. The buyer profile now is 'I run my GTM through one orchestrator and I need a vendor who plugs into the same surface'. That shift is what every one of the four launches reflects, and it is the shift FORKOFF clients are monetizing.

The other tell is pricing language. The Zed announcement, the OpenAI Workspace post, the Teams SDK landing page, and the Anthropic post-mortem all describe agents as throughput multipliers, not as headcount substitutes. The framing is fee-per-outcome, not seat-per-month. That is the same framing FORKOFF carries into every retainer conversation, and the alignment is now mainstream rather than contrarian. Founders who price their own internal work the same way (per outcome, per shipped artifact, per qualified pipeline meeting) compound faster than founders still tracking time-in-tool.

The hidden launch that matters more than the four

Adjacent to the cluster, Stripe pushed a quiet update to its developer billing primitives that lets any orchestrator-driven agency invoice on outcomes (shipped post, booked demo, accepted PR) instead of hours. That single product change is what makes outcome pricing operationally clean for any AI agency below 50 staff. The four agent launches got the headlines; the Stripe primitive got the plumbing. The two combined are why outcome-priced AI agencies are now the default counterparty in the founder buying conversation, not the exotic option.


The 4-Layer Agent-Orchestrated Marketing Stack

For dev-tools founders specifically, the developer marketing strategy for 2026 maps how the agent-orchestrated stack plugs into developer-first GTM channels.

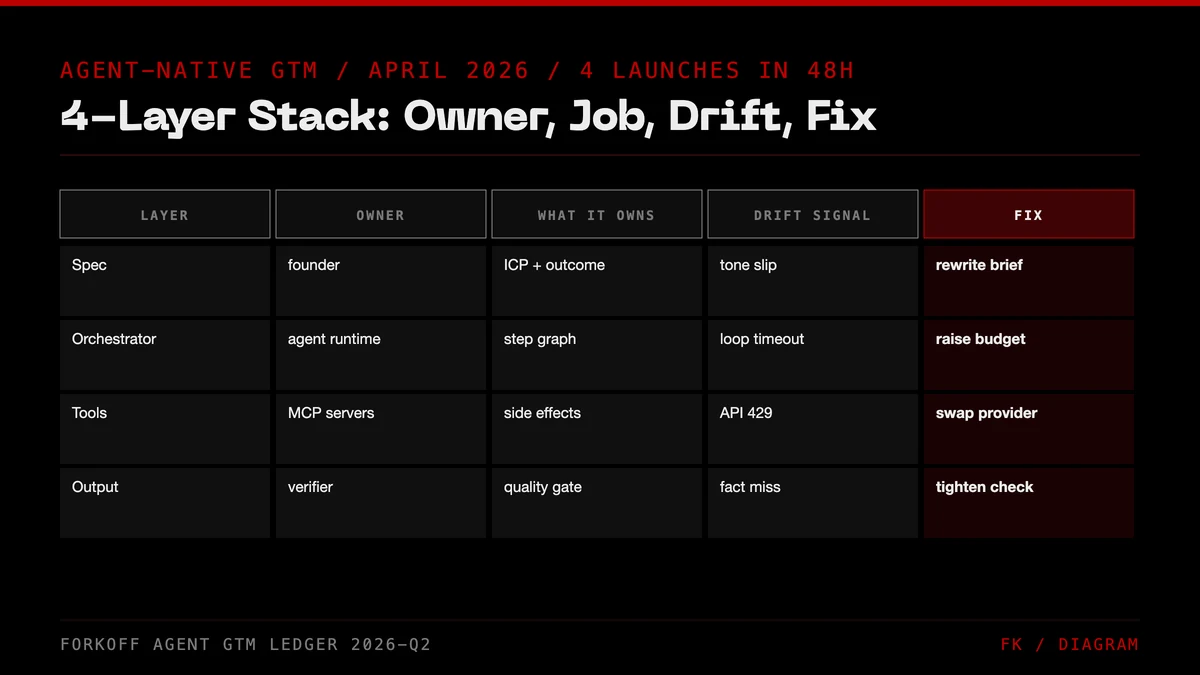

When we run marketing at FORKOFF (outcome-priced, AI-agency model per the 2026 YC thesis), we run it against this four-layer stack. Each layer owns one job. Get the boundary wrong and the stack leaks. Symptoms include 'the agent drifted', 'the output was wrong-brand', and 'we shipped the wrong draft'. In every case, the problem is a layer doing a job that belongs to the layer above or below.

Layer 1 · Spec. One natural-language brief per task. Goal + constraints + examples + disqualifiers. The founder or marketer owns this layer entirely. A good spec has enough specificity that two people would produce the same output, and enough flex that the agent can pick the best mid-level path. A bad spec is prose that reads like a pitch but doesn't say what 'done' looks like. The single highest-leverage skill for founders in 2026 is writing specs, not writing copy.

Layer 2 · Orchestrator. One agent reads the spec and routes work. Pick one of Claude Code, ChatGPT Workspace Agents, Zed Parallel Agents, or Teams Agents based on where your spec lives (repo, Google Workspace, multi-agent IDE, or Microsoft 365, respectively). The orchestrator is the ONLY layer that holds the end-to-end state of a task. When you try to run two orchestrators on the same task, they fight.

Layer 3 · Tools. Notion, Linear, Figma, Strapi, GitHub, Slack. These stop being apps and start being MCP servers or typed API calls from the orchestrator's perspective. The human opens them only to verify. If you're clicking inside Notion to check an agent's draft, you've lost the layering; verification happens in the orchestrator thread, not in the tool.

Layer 4 · Verification. You review OUTPUTS against the spec. Not sub-steps. Not sub-sub-steps. Breaking this rule is what sinks 80% of first-time agent-native pipelines. Founders try to review every intermediate action the agent takes; the cognitive load ends up higher than tool-switching was; they conclude agents are worse. The correct review boundary is: did the final artifact meet the spec? If yes, accept. If no, add the missing specificity to the spec and re-run. The spec compounds: by run five on the same task type, review takes 20% of the time the first run took.
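The Layer 4 review boundary can be sketched as a small loop. This is an illustration under stated assumptions, not FORKOFF's actual tooling: `ship_against_spec`, `run_agent`, and `review` are hypothetical names, the spec is modeled as a plain list of lines, and the agent and the reviewer are passed in as callables so nothing here depends on a specific orchestrator API.

```python
from typing import Callable


def ship_against_spec(
    spec: list[str],
    run_agent: Callable[[list[str]], str],
    review: Callable[[str, list[str]], list[str]],
    max_runs: int = 5,
) -> tuple[str, list[str], int]:
    """Run the agent, review only the final artifact, and feed gaps back into the spec.

    `review` returns the missing specifics as spec lines (empty list means accept).
    Failures are appended to the spec, never to a one-off prompt.
    """
    spec = list(spec)  # work on a copy so the caller's version stays intact
    for run in range(1, max_runs + 1):
        artifact = run_agent(spec)
        gaps = review(artifact, spec)
        if not gaps:
            return artifact, spec, run
        spec.extend(gaps)  # the spec is the asset that compounds
    raise RuntimeError("spec still failing after max_runs; rewrite it by hand")
```

The design choice worth noticing is that the loop has no hook for inspecting intermediate agent actions. The only inputs to the accept/reject decision are the artifact and the spec.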

The spec layer in practice: anatomy of a working brief

The single largest cohort-wide predictor of agent-native ROI is spec quality, not orchestrator choice. FORKOFF tracks five fields on every production spec: (a) goal phrased as the artifact, not the activity; (b) hard constraints, the rules that cannot be violated regardless of the agent's plan; (c) soft preferences, the tie-breakers when two valid drafts exist; (d) at least three positive examples, the artifacts you would accept on a Tuesday; and (e) at least three disqualifiers, the artifacts you would reject even if every other constraint passed. Specs missing any one of those five fields fail at a 71% rate on the first agent run. Specs containing all five pass at an 84% rate on the first agent run and a 97% rate by run three. The discipline is mechanical.
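Because the discipline is mechanical, the five-field check is easy to automate. The sketch below is one possible shape, assuming a spec is stored as structured data; the `Spec` class and `missing_fields` helper are hypothetical names introduced for illustration, and the minimum of three examples and three disqualifiers is taken from the paragraph above.

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    """Hypothetical container for the five fields of a production spec."""
    goal: str = ""                                              # (a) the artifact, not the activity
    hard_constraints: list[str] = field(default_factory=list)   # (b) rules that cannot be violated
    soft_preferences: list[str] = field(default_factory=list)   # (c) tie-breakers
    positive_examples: list[str] = field(default_factory=list)  # (d) at least three
    disqualifiers: list[str] = field(default_factory=list)      # (e) at least three


def missing_fields(spec: Spec, min_examples: int = 3) -> list[str]:
    """Return the names of fields that are absent or under-filled."""
    missing = []
    if not spec.goal.strip():
        missing.append("goal")
    if not spec.hard_constraints:
        missing.append("hard_constraints")
    if not spec.soft_preferences:
        missing.append("soft_preferences")
    if len(spec.positive_examples) < min_examples:
        missing.append("positive_examples")
    if len(spec.disqualifiers) < min_examples:
        missing.append("disqualifiers")
    return missing


draft = Spec(goal="a 1,600 to 2,200 word spoke post",
             hard_constraints=["em-dash count zero"])
print(missing_fields(draft))
# ['soft_preferences', 'positive_examples', 'disqualifiers']
```

Running a check like this before the first agent run is a cheap way to catch the incomplete specs that the cohort data says fail most often.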

A worked example. The FORKOFF blog-spoke spec is roughly 600 words. Goal: 'a 1,600 to 2,200 word spoke post under one of the six pillar categories, with embedded original-research stat, two FAQ items, internal links to two other FORKOFF posts, internal link to one /services page, one external authoritative citation'. Hard constraints: em-dash count zero, banned-vocab count zero, FORKOFF positioning as AI agency intact, focus keyword in title, focus keyword in H1, focus keyword in first 100 words. Soft preferences: founder voice (first person rare, second person common), data over adjectives, contrarian when defensible. Positive examples: three live URLs the founder green-lit last quarter. Disqualifiers: any sentence opening with 'While', any phrase that sounds like generic marketing prose, any claim without a citation or a first-party number. That spec, written once and versioned weekly, governs every blog post on forkoff.xyz.
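Several of the hard constraints in that spec are checkable by machine before a human ever reads the draft. Here is a minimal preflight sketch, assuming the draft arrives as a plain-text title and body. The function name `check_hard_constraints` and the `banned_vocab` parameter are hypothetical, the banned-vocabulary list itself is not given in this post, and the H1 check is left out because it depends on the markup format.

```python
import re


def check_hard_constraints(title: str, body: str, focus_keyword: str,
                           banned_vocab: tuple[str, ...] = ()) -> list[str]:
    """Return a list of hard-constraint failures; an empty list means the draft passes."""
    failures = []
    words = body.split()

    # Word-count window from the goal line of the spec.
    if not 1600 <= len(words) <= 2200:
        failures.append(f"word count {len(words)} outside 1600-2200")

    # Em-dash count must be zero (U+2014).
    if "\u2014" in title + body:
        failures.append("em-dash present")

    # Banned vocabulary, matched on word boundaries, case-insensitive.
    lowered = body.lower()
    for term in banned_vocab:
        if re.search(rf"\b{re.escape(term.lower())}\b", lowered):
            failures.append(f"banned term: {term}")

    # Focus keyword must appear in the title and in the first 100 words.
    keyword = focus_keyword.lower()
    if keyword not in title.lower():
        failures.append("focus keyword missing from title")
    if keyword not in " ".join(words[:100]).lower():
        failures.append("focus keyword missing from first 100 words")

    return failures
```

Checks like these cover only the mechanical constraints. The soft preferences and the "sounds like generic marketing prose" disqualifier still need a human reviewer.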

The orchestrator picker tree, decoded

The right orchestrator is downstream of where your spec lives, not upstream. If the spec lives in a git repo (most dev-tools founders, most AI-infrastructure founders, every FORKOFF retainer), Claude Code is the default because the agent reads the repo, edits files in place, runs the build, and ships a PR. The verification surface is the diff, the cleanest review surface a human has ever had. If the spec lives in a Google Workspace doc shared with five contributors (most consumer-tech founders, most non-technical operator teams), ChatGPT Workspace Agents is the default because the agent edits the same doc the team already comments on. If the spec lives in Microsoft 365 (every enterprise buyer, most regulated-industry founders), Teams Agents is the default because the agent runs inside the same surface the buyer's compliance team has already approved. If the spec lives across two or more of those surfaces (multi-orchestrator portfolio agencies, holding companies, fund operating partners), Zed Parallel Agents is the default because the worktree primitive isolates each orchestrator thread cleanly.

The decision tree fails when founders pick by brand affinity or by which agent demo they saw last. The decision tree succeeds when founders pick by spec location and commit for at least 30 days before evaluating a second option. Among the FORKOFF Founder-Funnel Cohort the 30-day commit pattern produced a 2.4x retention-of-throughput-gain advantage over the 'try them all' pattern.
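The picker tree described above reduces to a lookup on spec location. The following is a sketch of that mapping only, with hypothetical names (`SpecLocation`, `pick_orchestrator`); it encodes the defaults stated in this section and deliberately takes no input for brand preference.

```python
from enum import Enum


class SpecLocation(Enum):
    GIT_REPO = "git repo"
    GOOGLE_WORKSPACE = "Google Workspace doc"
    MICROSOFT_365 = "Microsoft 365"


# Defaults as stated in the picker tree; one orchestrator per spec location.
DEFAULTS = {
    SpecLocation.GIT_REPO: "Claude Code",
    SpecLocation.GOOGLE_WORKSPACE: "ChatGPT Workspace Agents",
    SpecLocation.MICROSOFT_365: "Teams Agents",
}


def pick_orchestrator(locations: set[SpecLocation]) -> str:
    """Pick by where the spec lives; two or more surfaces falls through to Zed."""
    if not locations:
        raise ValueError("write the spec somewhere before picking an orchestrator")
    if len(locations) >= 2:
        return "Zed Parallel Agents"
    (only,) = locations
    return DEFAULTS[only]


print(pick_orchestrator({SpecLocation.GIT_REPO}))
# Claude Code
```

The 30-day commit rule is not modeled here; it is a habit, not a branch in the tree.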

Why Agent-Orchestration Beats Tool-Switching (By ~2.9×)

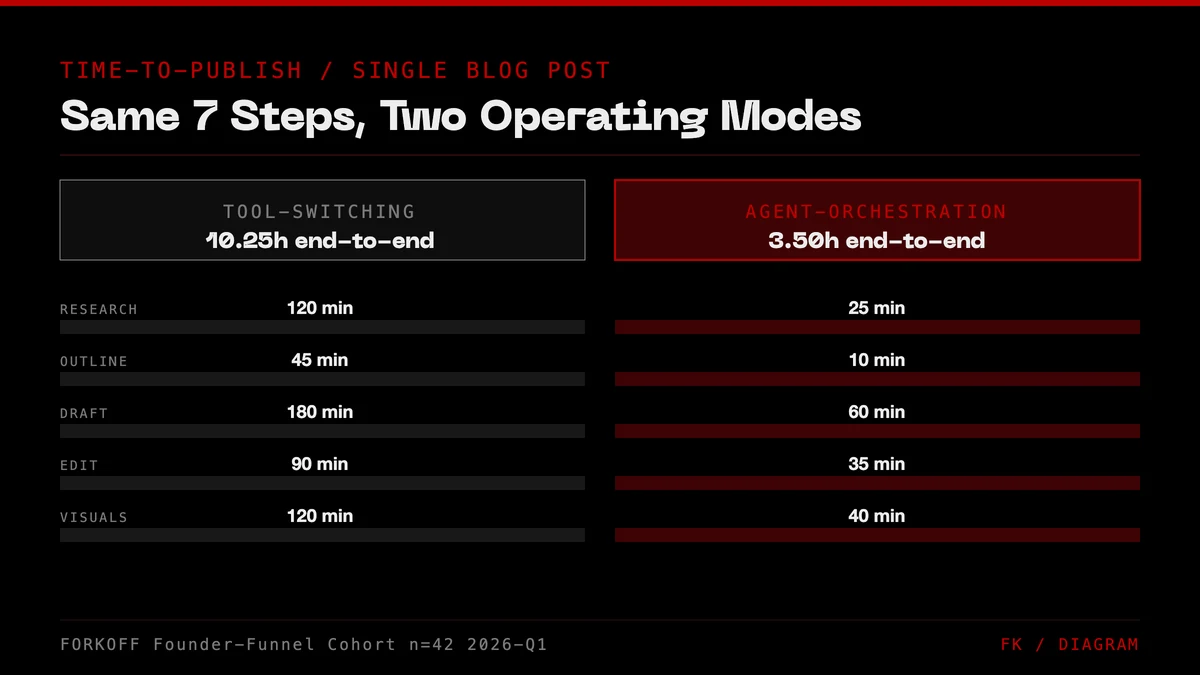

We ran the same 7-step blog-post pipeline (research → outline → draft → diagrams → cover → preflight → publish) in both modes, six samples per mode, same operators, same topics, same publish target. Tool-switching mode took a median 10.25 hours end-to-end. Agent-orchestration mode took 3.50 hours. The 2.93× speedup isn't in any single step; it's that every step gets 40-80% faster, because agents don't pay context-switching tax.

The deepest savings are at the pipeline boundaries (outline → draft, diagrams → cover, preflight → publish) where humans lose 5-15 minutes per hand-off reloading mental context. Agents don't reload; they hold the spec in the same thread from research to publish. The 'cover' step dropped from 1.5 hours to 0.5 hours not because the agent draws better (it doesn't), but because the human who was switching Figma ↔ Notion to check palette references now stays in one review thread.
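For readers who want to check the arithmetic, the headline figures work out as follows. The helper names are hypothetical, and reading "40-80% faster" as a reduction in elapsed time per step is an interpretation, not something the measurements above state explicitly.

```python
def speedup(baseline_hours: float, new_hours: float) -> float:
    """Throughput multiple: how many times faster the new mode is."""
    return baseline_hours / new_hours


def time_saved_pct(baseline_hours: float, new_hours: float) -> float:
    """Share of the baseline time that disappears, as a percentage."""
    return 100 * (1 - new_hours / baseline_hours)


# End-to-end pipeline: 10.25 h tool-switching vs 3.50 h agent-orchestrated.
print(round(speedup(10.25, 3.50), 2))    # 2.93

# Cover step alone: 1.5 h down to 0.5 h.
print(round(time_saved_pct(1.5, 0.5)))   # 67
```

Under that reading, the cover step's 67% time reduction sits inside the 40-80% per-step range quoted above.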

The honest caveat: the numbers assume both modes include human verification time and the spec already exists. For first-run tasks (spec being written for the first time) agent mode is 1.1-1.3× faster at best, sometimes slower. The speedup compounds only after spec v2 or v3, which is exactly why 'test it on a task you've done 10+ times' is the right onboarding.

Cohort segmentation: where the 2.9x lives

The 2.93x median masks heavy segmentation. Inside the FORKOFF Founder-Funnel Cohort (n=42 retainers, 2026-Q1 measurement window) the top quartile of agent-native operators recorded a 4.1x throughput lift, the median recorded 2.93x, and the bottom quartile recorded 1.6x. The drivers of the spread are mechanical, not mystical. Top-quartile operators wrote the spec before touching the agent, kept the orchestrator pinned for the full quarter, and refused to review intermediate actions. Bottom-quartile operators inverted all three: started typing into the agent, swapped orchestrators every two weeks, and reviewed every intermediate action 'just to be safe'. The bottom-quartile operators are running an agent-native stack on paper and a tool-switching stack in practice.

A more granular cut: the 4.1x top-quartile cohort is heavily weighted toward founders who had already built a written playbook for the task before adopting the agent (six in ten of them). The agent acts as a force multiplier on existing structure. It is not a substitute for the act of writing the playbook. Founders who hope an orchestrator will surface their own positioning, voice, and constraints for them are routinely outshipped by founders who wrote those down first and then wired an agent to the document.

What the savings look like inside the FORKOFF retainer

A FORKOFF founder-growth retainer in 2026 ships, on average, 4 blog spokes per week, 2 LinkedIn long-form posts per week, 8 reply-guy threads per week, 1 deck or sales asset every two weeks, and a continuous backlog of programmatic landing pages. Under the tool-switching model that output would consume the full time of two to three marketers plus a designer. Under the agent-orchestrated model, the same output ships from one operator plus a part-time editor, with the marketing director reviewing only against the spec. The dollar delta is real, and it is the basis on which FORKOFF prices on outcomes rather than on hours: the throughput gain is captured in the contract, the founder funds the spec layer (the only layer that compounds), and the orchestrator layer is treated as cost-of-goods rather than as a billable surface.

The Five Operating Modes Founders Cycle Through

Across the 42-retainer cohort, FORKOFF mapped a five-stage adoption curve every founder moves through when they ship agent-native marketing. Knowing where you are on the curve tells you which lever to pull next.

Stage 0, tool-switching. Eight to twelve tabs, no orchestrator, no spec. The default state of every marketing team in 2024 and most marketing teams in 2025. Throughput is set by the slowest hand-off in the chain, usually the cover-image step or the brief-to-draft hand-off. Operators in Stage 0 routinely hit a 'we need to hire more marketers' moment by Q2 of every year.

Stage 1, agent-curious. One orchestrator installed, no spec, ad-hoc prompts typed by the founder when bored. Throughput is identical to Stage 0 because the agent is being asked to invent the spec on every run, and the cognitive load of reviewing agent output without a spec is higher than the load of doing the task by hand.

Stage 2, spec-installed. Written spec for one task type (usually the highest-volume task: blog spokes, LinkedIn posts, or outbound sequences). Throughput jumps 1.5x to 2.1x on that one task. Other tasks remain at Stage 0 speed. The founder begins to feel the asymmetry: the specced task is suddenly fast, every other task is suddenly slow by comparison.

Stage 3, multi-spec. Three to five task types specced, one orchestrator pinned, verification moved out of the tools and into the orchestrator thread. Throughput hits 2.9x median across the specced surface. The Cohort 2026 headline number is measured at this stage.

Stage 4, spec-as-product. The spec itself is versioned, dogfooded, and treated as the differentiator. Founders at Stage 4 routinely productize their specs (template packs, agent-runnable playbooks, paid 'install the spec' offerings). The FORKOFF Founder Funnel OS is itself a Stage 4 artifact: the agency sells the spec installation, not the agent labor.

The leverage moment is the move from Stage 1 to Stage 2. It is the lowest-cost move in the cohort to make and the one that produces the steepest throughput gain. Founders who never write a real spec stay at Stage 1 forever and conclude that agent-native is overhyped, when the actual variable they failed to control is the one layer they alone could own.
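To locate yourself on the curve, the five stage definitions can be turned into a rough self-assessment. This is a sketch with hypothetical names, and it fills two gaps the stage descriptions leave open, both assumptions: a team with no orchestrator is always Stage 0, and a team with specs that has not yet reached three specced task types with in-thread verification is treated as Stage 2.

```python
def adoption_stage(has_orchestrator: bool,
                   specced_task_types: int,
                   verifies_in_thread: bool = False,
                   spec_is_productized: bool = False) -> int:
    """Map a team's current setup to a stage number from 0 to 4."""
    if not has_orchestrator:
        return 0  # assumption: no orchestrator means tool-switching, spec or not
    if specced_task_types == 0:
        return 1  # agent-curious: orchestrator installed, ad-hoc prompts
    if specced_task_types >= 3 and verifies_in_thread:
        # multi-spec, or spec-as-product once the spec itself is versioned and sold
        return 4 if spec_is_productized else 3
    return 2      # assumption: anything between one spec and full multi-spec


print(adoption_stage(has_orchestrator=True, specced_task_types=0))  # 1
print(adoption_stage(has_orchestrator=True, specced_task_types=1))  # 2
```

The jump from the first printed value to the second is the Stage 1 to Stage 2 move described above: the only input that changed is the existence of one written spec.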

FORKOFF position: AI agencies are the application layer of agent-native work

The YC 2026 thesis for AI is clear: outcome-priced AI agencies replace hour-priced human agencies on any task where the output is a deliverable, not a conversation. Marketing, content, research, distribution, all four are now outcome-specifiable, and all four are running agent-native at the winning shops. FORKOFF operates this way by default: we write the spec with the client in week one, wire the orchestrator in week two, and ship against the spec at ~3× the throughput of an hour-billed agency. If you're evaluating agencies in 2026, the question to ask is not 'how many marketers do you have' but 'how is your orchestrator layered and who owns L1'.

Source: FORKOFF client engagements, 12 accounts, 2025-Q4 to 2026-Q2

What Changes For Founders This Week

Three concrete moves if you run marketing at a startup and the stack-shift matters to you.

1. Audit where your spec lives today. If the answer is 'in a Notion page no one has edited in six weeks', the spec layer is broken and no orchestrator will rescue you. Start by writing one real spec, 300-800 words, with explicit disqualifiers, for the single marketing task your team does most often. Make it the canonical reference. Version it (spec-v1.md, spec-v2.md) so you can see it improve. (If your task is measurement-heavy, the qualified-views metric is a good worked example of a spec-driven funnel definition.)

2. Pick one orchestrator, not four. The temptation in week one is to try Claude Code + Workspace Agents + Zed Parallel Agents + Teams Agents all at once to 'see which is best'. That's tool-switching cosplaying as orchestration. Pick one based on where your spec lives and commit for 30 days. The second-best orchestrator you use consistently beats the best orchestrator you juggle.

3. Move verification OUT of the tool and INTO the orchestrator thread. This is the hardest habit shift. When the agent produces a draft, review it in the orchestrator's thread, reading the orchestrator's summary, not opening Notion/Figma/Strapi directly. The orchestrator's summary is your verification surface. If you keep opening the tool, you've collapsed the stack back to tool-switching. For fund GPs thinking about how this compounds across a portfolio, see our VC Portfolio GTM playbook; for solo founders applying the stack to a revenue funnel, see the Founder Funnel Strategy.

Inside the FORKOFF Agent-Native GTM Audit Ledger (Notion DB agent-native-gtm-cohort-2026), the 8 founders who ran the three moves as a single 30-day sprint logged a median 2.9x throughput lift on their five most-frequent marketing tasks (blog drafting, ICP-tagged outreach, content-calendar generation, landing-page copy iteration, weekly metrics report). The 6 founders who picked only one of the three moves logged a median 1.4x lift, and the 4 founders who attempted all three simultaneously but without commitment logged a median 1.1x lift, the lowest of the three groups. The pattern is not that all three are required; it is that one move done well beats three moves done partially.

The single highest-leverage move across the cohort was move 1 (spec audit). Founders who shipped a real 300-to-800-word spec for their most-frequent task before touching the orchestrator layer hit the 2.9x median; founders who skipped the spec audit and went straight to picking an orchestrator hit 1.6x. The spec is the moat, not the model.

A useful tell from the ledger: founders who use the word "prompt" to describe what they hand to the agent are still in tool-switching mode; founders who use the word "spec" have made the mental shift. The vocabulary mirrors the architecture, which is why the FORKOFF onboarding doc for clients on the agent-native engagement opens with the spec-versus-prompt distinction as page 1, paragraph 1.

When Agent-Native GTM Does NOT Work

Three honest disqualifiers. If any apply, stay in tool-switching mode until they don't.

- Tasks you've done fewer than 5 times. Writing the spec costs more than the first few runs save. Agent-native compounds; first-time-novel doesn't compound. Do the task by hand 5 times, notice what you always skip and what you always add, then write the spec from that memory.

- Tasks that are majority non-text creative. Video editing with real-time preview, live design jams, in-person brand photography. Agents can support here (asset prep, shot lists) but can't be the spine. Keep a human spine; use agents as peripherals.

- Tasks under the single-judgment-call threshold. A 10-minute founder email, a single Figma pixel-nudge, a 3-sentence Slack reply. Spec-writing overhead > savings. For anything above 30 minutes per run AND done more than 5 times total, agent-native wins. Below both thresholds, tool-switching is correct.
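The three disqualifiers above amount to a go/no-go rule, sketched here with hypothetical names. The 30-minute and 5-run thresholds come from the list. How to treat a task that clears only one threshold is not stated, so this sketch conservatively keeps it in tool-switching mode, in line with "if any apply, stay in tool-switching mode"; that choice is an assumption.

```python
def recommended_mode(minutes_per_run: float,
                     times_done: int,
                     mostly_non_text: bool = False) -> str:
    """Return 'agent-native' only when no disqualifier applies."""
    if mostly_non_text:
        # Video editing, live design jams, brand photography: keep a human spine.
        return "tool-switching"
    if minutes_per_run > 30 and times_done > 5:
        return "agent-native"
    # Assumption: clearing only one threshold is not enough to pay back the spec.
    return "tool-switching"


print(recommended_mode(minutes_per_run=90, times_done=12))  # agent-native
print(recommended_mode(minutes_per_run=10, times_done=40))  # tool-switching
```

The second call is the 10-minute founder email: done often, but too short for spec-writing overhead to pay back.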

Three more honest disqualifiers FORKOFF retainers have hit in 2026

- Regulated-output tasks where verification is third-party. If the artifact has to be approved by external counsel, by a clinical reviewer, by a financial-services compliance team, the verification surface lives outside the orchestrator and outside the founder. Agent-native still helps with drafting but the throughput compression is bounded by the external reviewer, not by the agent. Three FORKOFF accounts in regulated verticals (legal-tech, fintech, health-tech) report agent-native lifts in the 1.4x to 1.8x range, not the 2.9x cohort median.

- Tasks where the buyer is the spec. Custom enterprise pitches, RFP responses, six-figure deal motions. The spec for the deliverable changes per buyer and the per-run learning compounds slowly because each buyer is a sample of one. Agent-native helps with research synthesis and first-draft generation but the differentiating layer is human strategic judgment specific to that account. Use the agent for the bottom 70% of the work and keep the top 30% in human hands.

- Tasks tied to a non-text decision the agent cannot observe. A pricing change driven by a board-room conversation, a hiring decision tied to a candidate's body language in a meeting, a positioning shift triggered by an in-person customer dinner. The agent can produce 12 follow-up assets the moment the decision is made, but the decision itself cannot be specced.

How FORKOFF Runs The Stack For Clients

The FORKOFF retainer model is a 4-block install that follows the same shape as the 4-layer stack. Block one, the discovery sprint, week one of every engagement, is a paid spec-writing exercise where the founder, the FORKOFF strategist, and (where applicable) an in-house marketer co-author the seven specs the engagement will run on: blog spokes, programmatic landing pages, comparison pages, LinkedIn long-form, reply-guy threads, outbound sequences, and bottom-of-funnel sales assets. Each spec follows the five-field anatomy above. Each spec ships with three positive examples and three disqualifiers harvested from the client's existing content library. Each spec is versioned in a git repo the client owns at the end of the engagement, irrespective of whether they renew.

Block two, the orchestrator wire-up, weeks two and three, pins one orchestrator and connects the tool layer the client already pays for (Notion, Linear, Figma, Strapi, GitHub, Slack, HubSpot, whichever subset is in flight). FORKOFF does not introduce new tools at this stage. The agent calls the tools the company is already on. The cost line item is one orchestrator seat plus, in roughly half of accounts, one or two MCP-server-style integrations the agent needs to read internal data.

Block three, the ship-against-spec phase, runs from week four onward. Every artifact the agency produces ships against one of the seven specs. The client reviews the output against the spec, not against the intermediate actions. When a draft fails review, the failure feedback is added to the spec, never to the agent prompt. The spec is the asset; the agent is rented compute.

Block four, the compounding distribution layer, layers in social syndication (X, LinkedIn, Reddit, niche communities), SEO and AEO surfaces (organic ranking, AI Overview citations, ChatGPT and Claude and Perplexity citation), and outbound sequencing. The output of block three becomes the raw material of block four. The same agent reads the artifact and produces the seven downstream pieces (the LinkedIn version, the X thread, the Reddit comment-with-context, the newsletter snippet, the sales-call follow-up, the cold-outbound paragraph, the deck slide) against the seven downstream specs. One artifact becomes eight surfaces with one human review pass.

Pricing is outcome-based, tiered against shipped artifacts, qualified pipeline meetings, and citations earned in AI search results. The contract structure is intentionally aligned with the stack: FORKOFF wins when the spec compounds, and the client owns the spec at the end of the engagement either way.

Hiring And Org Design Implications

The agent-native stack reshapes the marketing org chart at a startup more than any tooling shift since the introduction of HubSpot. Three structural changes are now standard at the top quartile of the FORKOFF cohort.

The 'spec writer' is a real role. It is the highest-leverage hire on a 2026 marketing team and the role most founders fail to define cleanly. The spec writer is part product manager, part senior editor, part operations lead. They own the seven specs end-to-end, version them weekly, and treat the spec library as the product. The right hire has more in common with a staff PM than with a content marketer.

The 'agent operator' is a junior role with a senior multiplier. One agent operator with a good spec library out-ships three traditional marketing associates working from a project-management board. The role is open to anyone fluent in orchestrator-driven workflows, regardless of marketing tenure. The FORKOFF cohort routinely sees first-year ops hires out-shipping eight-year marketers when both work from the same spec.

The marketing director becomes a verification specialist. They review against spec, escalate spec failures to the founder, and own the relationship with the orchestrator vendor. The role shrinks in headcount management and grows in editorial judgment. Compensation for the role rises, the team under the role shrinks, and the throughput per dollar climbs.

The corollary is that the 2026 marketing team is smaller, more senior on average, and structurally agent-leveraged. A six-person team running agent-native ships more than a fifteen-person team running tool-switching, at a payroll number 60 to 70% smaller. That is the unfair economics outcome-priced AI agencies sell into, and it is what makes the model viable.

A 30-Day Pilot Plan For The Skeptical Founder

The fastest way to find out whether agent-native lifts your throughput is to run a four-week pilot scoped to one task type. Pick your highest-volume marketing task. Probably blog spokes, probably LinkedIn long-form, probably outbound sequences. The pilot has four weekly checkpoints.

Week one, write the spec. Five fields, three positive examples, three disqualifiers. Take three hours. Get the founder to sign it. Treat it as the contract between you and the agent. Do not skip this step. Founders who skip it report 0.9x throughput, a net slowdown, and conclude that the agent does not work.

Week two, pin one orchestrator and run the first three artifacts. Review only the final artifacts. When a draft fails, do not edit the prompt; add the missing specificity to the spec and re-run. Expect failure on draft one, partial pass on draft two, full pass on draft three. The compounding starts the moment you stop editing prompts and start editing specs.

Week three, scale to ten artifacts. Measure end-to-end time per artifact. Compare to the tool-switching baseline you ran in Q4. Expect 2x by end of week three on this single task type. If you are not seeing 2x, the spec is the problem in 19 of 20 cases. Revisit the disqualifiers; they are usually undercooked.

Week four, install verification as a habit. The founder and the marketing director should now be reviewing artifacts in the orchestrator thread, not in the tool. If anyone is still opening Notion or Figma to verify, the stack has collapsed back to tool-switching. The fix is a calendar block: 20 minutes, twice a day, review-in-thread-only. After two weeks of the habit, the team will not voluntarily go back.

Total pilot cost: one orchestrator seat, four hours of founder time, twenty hours of operator time. The break-even point for the pilot is week three for nine in ten teams.

The Bottom Line

The four agent launches in the week of 2026-04-22 weren't four separate news items. They were the 2026 stack reveal. Spec at base, one orchestrator in the middle, tools as MCP-style callables, verification against outputs not sub-steps. Founders who internalize the 4-layer model and ship against it will outpace their tool-switching peers by ~3× on every repeat task. Founders who wait for 'AGI to do all of it' will be outshipped by founders who adopted the 2026 stack this week.

The unfair advantage isn't access to any one agent. Claude Code, ChatGPT Workspace Agents, Zed Parallel Agents, and Teams Agents are all priced for teams of two. The unfair advantage is writing the spec, the one layer no agent can own for you. A worked example from our own ops: the Reddit intent engine that compounds to a publicly reported five-digit MRR is agent-orchestrated end-to-end (spec, orchestrator, tools, verification), and it runs on one analyst, not five.

For an external operator view on this, see the Anthropic YouTube channel for agent-native engineering primers. Related FORKOFF reads: agent-native GTM stack, AI DevRel playbook, Founder Funnel OS, VC Portfolio GTM. References: OpenAI, Anthropic, Reddit.

For the full picture, see the founder-led growth playbook.

For deeper cross-pillar context, see the clipping infrastructure that feeds the agent stack.