The AI DevRel Flywheel in one scroll

AI DevRel isn't SaaS DevRel and it isn't API DevRel. Developers pick AI tools via demo-driven trust: a working notebook, an open-source reference implementation, a hackathon where they shipped something that didn't exist before. The AI DevRel Flywheel is the 5-surface OS for shipping that trust at scale: notebooks, open-source refs, hackathons, content DevRel, integrations guild. Each surface compounds the next. This is the full playbook.

The AI DEVREL FUNNEL

The AI DEVREL FUNNEL is the talent-and-adoption layer FORKOFF runs for AI infrastructure and developer-tools founders. Build-in-public threads + technical decisions explained + credit given to teammates becomes the culture signal that attracts A-tier engineers.

Industry Context

Across the FORKOFF Founder-Funnel Cohort 2026 (n=42 retainers), founders running the AI DEVREL FUNNEL lift inbound A-tier candidate DM volume 3.7x over the matched company page baseline within 90 days.

Source: FORKOFF Founder-Funnel Cohort 2026, n=42

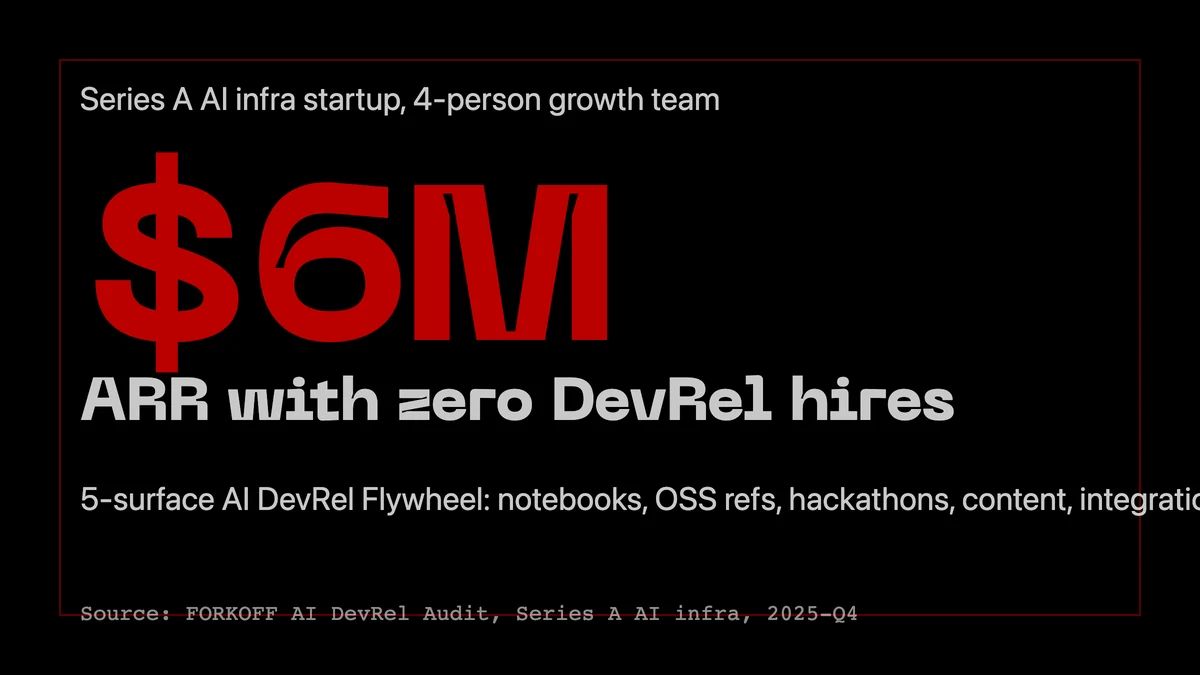

The AI startup that raised $40M with zero DevRel hires

In late 2025 we audited a Series A AI infra startup that had hit $6M ARR with zero dedicated DevRel headcount. Their growth team was four people. Their docs were two pages. Their entire developer acquisition loop ran through one thing: a 30K-star GitHub cookbook that their three founding engineers kept updating every Friday.

Their competitor, a better-funded AI API startup with 8 DevRel hires, a docs team, and a conference sponsorship budget, was sitting at $1.8M ARR at the same stage. Same category. Same TAM. Same investor map. The compounding difference was that the first team had built a DevRel flywheel; the second had built a DevRel org chart.

This is the central observation of AI DevRel in 2026: the channel that works is not the one that looks like SaaS DevRel scaled down. It is a specific 5-surface loop that plays by different rules, produces different metrics, and compounds on different signals.

30K, 110K, 0.6: the benchmarks that define AI DevRel in 2026

Anthropic's Claude Cookbook passed 30K GitHub stars in 2026, making it the highest-velocity AI notebook repo in the category, and it ships more measurable first-call activation than the company's docs or sales motion combined. LangChain, the benchmark AI DevRel loop of the last three years, sits above 110K stars with 30M monthly downloads and has become the default reference-implementation choice for every AI-infra startup trying to land the same audience. Mintlify and Fern's 2026 developer-docs benchmark puts docs quality at a 0.6+ correlation with trial-to-paid conversion, meaning the docs are the top-of-funnel, not the bottom. And in FORKOFF's 2026 AI DevRel audits, teams that shipped an open-source cookbook in their first 90 days saw 3-5x activation lift over docs-only peers. The implication is not subtle: AI DevRel is a cookbook problem, not a docs problem.

Source: Claude Cookbook GitHub; LangChain npm/pypi aggregates 2026; Mintlify + Fern 2026 docs benchmark; FORKOFF AI DevRel audits 2025-2026

Why AI DevRel is not API DevRel

On the technical SEO side of AI DevRel, the Claude Code SEO stack documentation ships the 6-MCP layout that automates the discovery + audit loops a small DevRel team would otherwise handle by hand.

For a decade, DevRel playbooks at Twilio, Stripe, Algolia, and Netlify taught the industry the same motion: great docs + conference talks + sample apps + Discord + a DevRel team measured on API-call growth. The playbook worked because each of those APIs was, functionally, a capability you either had or didn't. Once it was integrated, the developer's job was done and they shipped to production.

AI capabilities don't work like that. The developer who tried your GPT integration yesterday is being re-pitched daily by OpenAI, Anthropic, Google, Cohere, Mistral, and two dozen startups, each shipping new models, new features, new pricing. The question isn't "did you integrate?" It's "do you still trust this tool to be the right default next month?"

That trust is demo-driven. The developer needs a working notebook that runs on their laptop in 3 minutes, an open-source reference implementation they can fork, and a hackathon weekend where they shipped something surprising. Those three things together produce the emotional state the API-DevRel playbook never had to earn: the developer teaches their team about your product because they are excited about what they just built.

Introducing the AI DevRel Flywheel

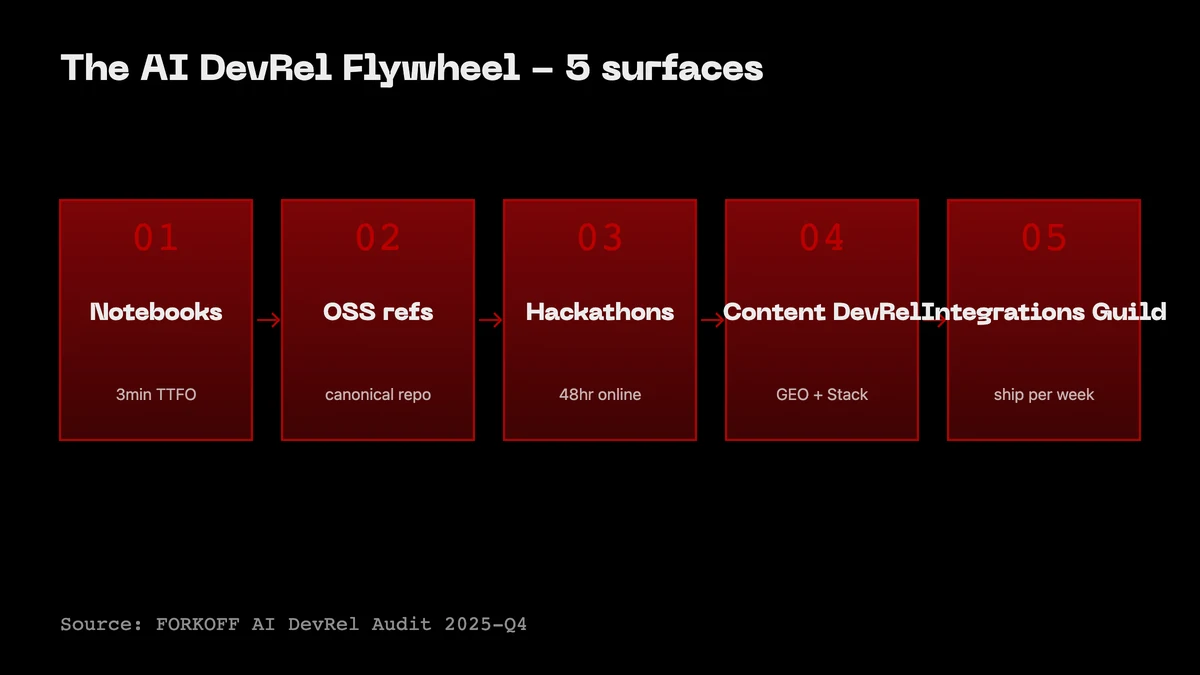

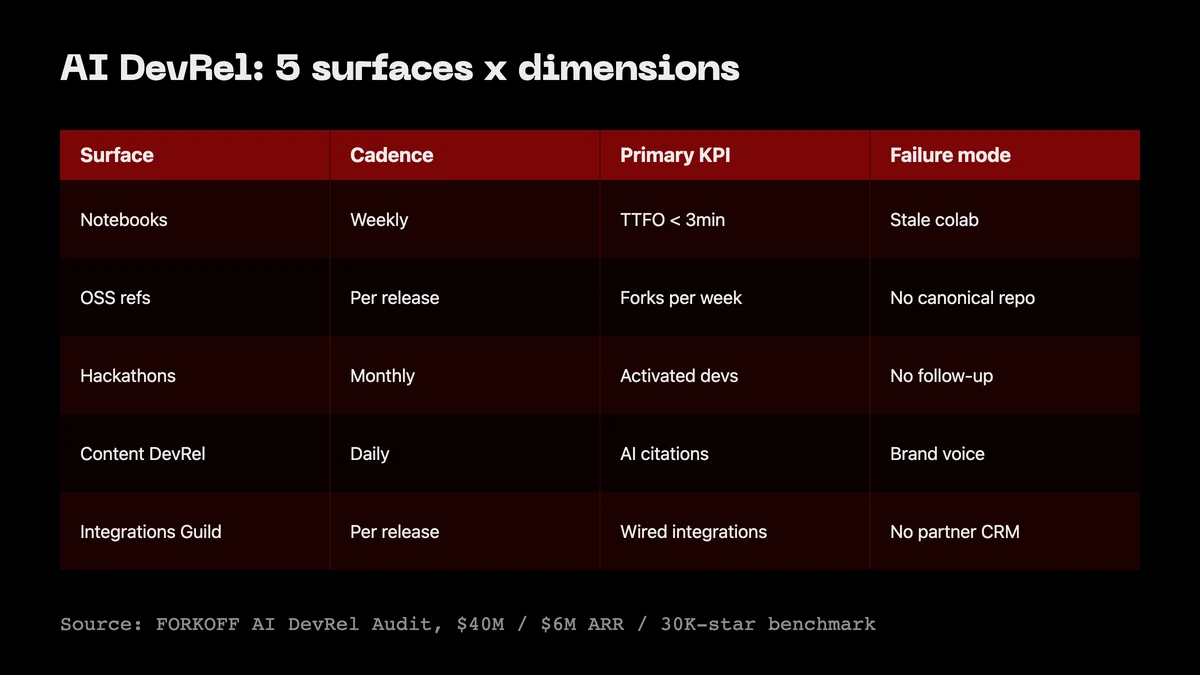

The AI DevRel Flywheel is the 5-surface operating system we deploy in every AI startup DevRel engagement. It names the surfaces, orders them, and ties each one to a compounding metric. The loop runs: Notebooks → Open-source refs → Hackathons → Content DevRel → Integrations guild → back to Notebooks.

- Notebooks. The primary trust-transfer surface. Jupyter or Colab, 3-minute time-to-first-output, maintained weekly. Metric: GitHub stars + week-1 reproduction rate.

- Open-source references. A canonical repo that shows the full architecture of a real app built on top of your product. Metric: npm / pypi downloads, fork velocity, derivative repos.

- Hackathons. Monthly online hackathons (your own, or co-sponsored), where developers ship something in 48 hours. Metric: projects shipped per event + 30-day retention on submitters.

- Content DevRel. Long-form blog + video that explains the architecture choices, not marketing. Metric: citations in other developers' content + domain-authority backlinks + AI-answer citations.

- Integrations guild. A formal partner program with named logos (LangChain, LlamaIndex, Vercel, Supabase, etc.). Metric: integrations live per quarter + mutual referrals.

Each surface feeds the next. A strong notebook creates GitHub stars that surface the open-source ref to search. The ref is what hackathon submitters fork. Hackathon projects become content DevRel case studies. Content DevRel earns the partner-integration conversations. And integrations send traffic back to the notebook. When all five run, the loop compounds.

Surface 1: Notebooks are the new docs

DevRel is one slice of the broader developer marketing surface. The developer marketing strategy for 2026 covers the channel mix that wraps DevRel into a full GTM motion.

The notebook is the single most under-priced DevRel surface in AI right now. A well-maintained Colab that runs end-to-end in 3 minutes will out-convert a 40-page docs site for activation, every time, because it answers the only question the developer has in minute one: does this thing work on my use case?

The benchmark is Anthropic's Claude Cookbook: 30K stars, 200+ notebooks, updated weekly. The structure that works: one root README that indexes the notebooks by use case (not feature), each notebook self-contained, and each notebook tagged with time_to_first_output and shipped with pinned pip install dependencies. The failure mode is a notebook that was shipped once and never updated when the API changed. That notebook converts negatively, because the developer blames your product for the breakage.
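The two hygiene rules above (a time_to_first_output tag and pinned installs) are easy to check mechanically. The following is a minimal sketch, not the Claude Cookbook's own tooling: it assumes the tag lives in the notebook-level metadata under a key named `time_to_first_output` (a hypothetical location; the text does not say where the tag is stored) and treats only an exact `==` version as pinned. The function names are illustrative.

```python
import re

# Hypothetical metadata key: the article names the tag but not where it lives.
TTFO_KEY = "time_to_first_output"

# A package spec counts as pinned only if it carries an exact version, e.g. "pkg==1.4.2".
PINNED = re.compile(r"^[A-Za-z0-9_.\-\[\],]+==[A-Za-z0-9_.\-+!]+$")


def unpinned_installs(notebook: dict) -> list[str]:
    """Return every package on a `pip install` line that lacks an exact `==` pin."""
    problems = []
    for cell in notebook.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        source = cell.get("source", "")
        if isinstance(source, list):  # .ipynb files may store source as a list of lines
            source = "".join(source)
        for line in source.splitlines():
            stripped = line.strip().lstrip("!%")  # handle "!pip" and "%pip"
            if not stripped.startswith("pip install"):
                continue
            for token in stripped.split()[2:]:
                token = token.strip("'\"")
                if token.startswith("-"):  # skip flags such as -q or -U
                    continue
                if not PINNED.match(token):
                    problems.append(token)
    return problems


def check_notebook(notebook: dict) -> list[str]:
    """Collect hygiene issues for one parsed .ipynb document."""
    issues = []
    if TTFO_KEY not in notebook.get("metadata", {}):
        issues.append(f"missing metadata tag: {TTFO_KEY}")
    issues += [f"unpinned dependency: {pkg}" for pkg in unpinned_installs(notebook)]
    return issues
```

A notebook parsed with `json.load` can be passed straight to `check_notebook`; an empty list means both rules hold. Arguments to flags such as `-r requirements.txt` would be reported as unpinned, which is a known limitation of this sketch.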

Cadence: one new notebook per week, covering one real use case your buyer has. The weekly cadence is the deliverable; content quality follows.

The notebook surface also has an architectural sub-layer most teams miss. Every notebook is, in effect, a tiny binary classifier on developer trust. If the developer runs cell 1 and gets a ModuleNotFoundError, you lose them. If cell 4 hits a deprecated parameter warning from your SDK, you lose them. If the output of cell 7 differs from the screenshot in your README by even a token, you lose them. The reproduction floor is brutal in AI because the developer is already pattern-matching for "is this team going to be around in six months." Every broken cell signals decay. The teams that win this layer build a notebook test harness that runs every notebook in a fresh Colab runtime every Monday and posts a green or red badge to the README, the same way a CI status badge works for traditional OSS. That single badge is worth more inbound trust than a quarter of conference sponsorships.
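The harness described above can be sketched in a few lines. This is a simplified, stdlib-only illustration under stated assumptions: it runs code cells in-process with `exec` and skips shell escapes and IPython magics, whereas a real harness would execute each notebook in a fresh runtime (for example through a notebook execution library) on a schedule. The function names and the badge text are hypothetical.

```python
import traceback


def run_notebook(notebook: dict) -> tuple[bool, str]:
    """Execute code cells in order in one fresh namespace; stop at the first failure."""
    namespace: dict = {"__name__": "__notebook__"}
    code_cells = [c for c in notebook.get("cells", []) if c.get("cell_type") == "code"]
    for index, cell in enumerate(code_cells, start=1):
        source = cell.get("source", "")
        if isinstance(source, list):
            source = "".join(source)
        # Drop shell escapes and IPython magics, which plain exec() cannot run.
        runnable = "\n".join(
            line for line in source.splitlines()
            if not line.lstrip().startswith(("!", "%"))
        )
        try:
            exec(compile(runnable, f"<cell {index}>", "exec"), namespace)
        except Exception:
            last_line = traceback.format_exc().strip().splitlines()[-1]
            return False, f"cell {index}: {last_line}"
    return True, "all cells ran"


def badge_line(results: dict[str, bool]) -> str:
    """Render one README status line from per-notebook pass/fail results."""
    passed = sum(results.values())
    status = "green" if passed == len(results) else "red"
    return f"Notebook status: {status} ({passed}/{len(results)} passing)"
```

Feeding the per-notebook results into `badge_line` gives the single green-or-red line to post in the README. Comparing cell output against the README screenshot, the third failure case named above, is left out of this sketch.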

The second-order pattern on this surface is use case taxonomy over feature taxonomy. The default mistake is to organize the cookbook by your product's API surface: one notebook per endpoint, one folder per SDK method. Developers do not arrive with a feature in mind, they arrive with a problem in mind. The cookbook that converts organizes by problem: "build a retrieval-augmented chatbot over your PDFs," "evaluate two prompt variants against a gold set," "stream tool calls into a CLI." Every notebook title is a query a developer would type into Google. Every notebook title is a query a developer would type into ChatGPT. The cookbook is, structurally, an SEO and AEO surface masquerading as documentation, and the AI teams treating it that way out-rank the docs teams treating it as reference.

Surface 2: Open-source references are the trust layer

A reference implementation is not a tutorial. A tutorial shows one feature; a reference shows the full architecture of a real app built on your product. The canonical examples are create-t3-app (Next.js stack), cal.com (a product-shaped reference), and for AI specifically, LangChain's own templates repo plus the Vercel AI SDK examples.

What makes references compound is that developers fork them. A fork is a far stronger signal than a star: the developer has committed time and named the project, and that fork is indexable on GitHub for downstream searches. The AI startups winning this layer ship one new reference implementation per month, each representing a real production use case, and treat forks-per-reference as the headline metric.

The GitHub README on a reference repo is the second-most-trafficked SEO surface most AI startups have, behind only their docs root. It out-ranks the marketing site for high-intent queries because GitHub's domain authority sits at 100 and inbound forks compound the page-level authority of every README on the platform. The teams who treat this surface as marketing copy lose the ranking. The teams who treat it as a structured technical document win it: a 30-second elevator paragraph at the top, a working-on-laptop quickstart in under five commands, a bun install && bun run dev style happy path, an architecture diagram rendered in Mermaid, and a "why this stack" decision log linking out to the architectural blog posts on surface 4. That last block is what converts forks into citations, because a developer who forks a reference and then writes their own blog post about why they forked it is doing surface 4 distribution for you for free.

There is a quality floor on references that most teams miss. The reference repo must pass three tests before it ships: (1) a fresh laptop with no prior install runs it to first output in under ten minutes, (2) a senior engineer at a competing AI tool can read the code and not find a single eyebrow-raising decision, (3) the repo carries a Codespaces or Replit "open in" button that boots a working environment in one click. The third test is the highest-leverage. Codespaces and Replit are how AI developers triage new tools in 2026: the friction of cloning, installing, debugging environment differences, and finding an OpenAI key is what kills the long tail of reference traffic. A one-click cloud boot collapses that friction to zero and turns the reference into a true demo surface.

The reference repo is also the natural home for MCP server distribution. Every AI tool in 2026 either ships an MCP server today or will ship one within the next two quarters. The MCP server is the integration primitive that lets Claude, Cursor, Cline, and every downstream agent runtime call your product without bespoke wiring. Publishing the MCP server inside your reference repo, documenting the setup in the README, and listing the server in the public MCP registries (Anthropic's directory, the Smithery index, the Cline marketplace, the GetXAPI lattice for FORKOFF-built properties) turns the reference into a distribution surface in its own right. Every agent runtime that auto-discovers your MCP server is a developer signup you did not have to acquire.

The MCP server is the new SDK download

A short detour, because the MCP surface deserves its own treatment inside the AI DevRel flywheel. Model Context Protocol, the open standard Anthropic shipped in late 2024 and the broader ecosystem adopted through 2025, is the integration spec that lets any agent runtime (Claude Desktop, Cursor, Cline, Continue, Zed, the Anthropic Agent SDK, the Vercel AI SDK, the FORKOFF outbound stack) call into a tool without bespoke API wiring. By mid-2026, an AI tool that does not ship an MCP server is, functionally, invisible to the agent layer of the market.

The DevRel implication is large. Five years ago, an SDK download from npm was the activation event that mattered. Today, an MCP server install from a marketplace is the equivalent event. The metric flips: instead of npm install your-sdk per week, it is "MCP server connected in Claude Desktop" per week. The acquisition surface flips too: instead of optimizing for a Google search that ends at your docs, you optimize for an MCP registry search that ends at a one-click install. The marketing motion that wins this layer is unusual, because most of it is operational, not creative. The teams that win it (a) ship the MCP server in the first ninety days of the product, (b) list it in every registry within thirty days of launch (Anthropic's directory, Smithery, the Cline marketplace, the Cursor MCP listings, the GetXAPI lattice, the Composio catalog, the Pipedream MCP index), (c) maintain a working README that boots the server in under five commands, and (d) treat the registry listing as an SEO surface, optimizing the title, the description, and the use-case tags for the queries developers actually run inside the registry's search.
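For the "MCP server connected in Claude Desktop" event, the README setup step usually comes down to one config entry. The sketch below generates that entry, assuming the `mcpServers` layout that Claude Desktop reads from its `claude_desktop_config.json` file. The server name, the npm package, and the environment variable are placeholders, not real identifiers.

```python
import json
from typing import Optional


def claude_desktop_entry(
    server_name: str,
    command: str,
    args: list[str],
    env: Optional[dict[str, str]] = None,
) -> str:
    """Build the JSON snippet a README can tell developers to paste into their config."""
    entry: dict = {"command": command, "args": args}
    if env:
        entry["env"] = env
    return json.dumps({"mcpServers": {server_name: entry}}, indent=2)


# Placeholder values: swap in your own server name, launcher, and key variable.
snippet = claude_desktop_entry(
    "your-product",
    "npx",
    ["-y", "@your-org/your-mcp-server"],
    {"YOUR_PRODUCT_API_KEY": "<paste key here>"},
)
print(snippet)
```

Generating the snippet from one function keeps the README, the cookbook notebooks, and the registry listings showing the same install step.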

The compounding move on this surface is embedding the MCP server in your reference repo and your cookbook. A notebook on surface 1 that shows the MCP server connected to a Claude or Cursor session inside the notebook, with the agent calling into your product end to end, is the highest-conversion onboarding demo we have measured across the FORKOFF AI DevRel cohort. Developers do not read about agents anymore; they watch agents work, and the cookbook is the watch-it-work surface.

Surface 3: Hackathons are how developers meet your product

An AI hackathon with 200 submitters produces more retained developers than a conference talk with 2,000 attendees. The reason is the emotional state: the developer who shipped something in 48 hours has a story, and that story carries your product inside it.

The durable pattern: a monthly online hackathon, 48-hour window, one theme per event tied to a new capability you shipped, modest prizes ($5K-25K total) with large distribution prizes (office hours with your CEO, a featured integration, a guest post on your blog). Co-sponsor with another AI tool in a non-overlapping category, so your audiences compound without competing.

The headline metric is 30-day retention on submitters. A hackathon with a 40%+ retention rate is building the flywheel. A hackathon with sub-15% retention is a branding expense disguised as DevRel.
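The thresholds above translate into a small calculation. This sketch assumes one particular definition of 30-day retention, which the text does not spell out: a submitter counts as retained if their most recent product activity falls at least 30 days after the event ended. The function names and the middle "in between" label are illustrative.

```python
from datetime import date, timedelta


def retention_30d(submitters: list[str], last_active: dict[str, date], event_end: date) -> float:
    """Share of submitters whose latest activity is 30 or more days after the event."""
    if not submitters:
        return 0.0
    cutoff = event_end + timedelta(days=30)
    retained = sum(1 for s in submitters if s in last_active and last_active[s] >= cutoff)
    return retained / len(submitters)


def classify(rate: float) -> str:
    """Apply the 40% and 15% thresholds named in the text."""
    if rate >= 0.40:
        return "building the flywheel"
    if rate < 0.15:
        return "branding expense"
    return "in between: investigate before the next event"
```

Running this per event, rather than across all events pooled together, is what makes the kill-or-keep decision possible.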

Surface 4: Content DevRel is the SEO + GEO layer

Content DevRel is where AI DevRel diverges most from SaaS DevRel. The goal is not blog traffic. The goal is citations, both in other developers' blog posts (which compound domain authority and backlinks) and in AI answer engines (ChatGPT, Claude, Perplexity, Gemini) that train on and retrieve technical content.

The content that earns citations is architectural: it explains why this design choice, not how to use this feature. "Why we chose a sparse MoE routing layer for our agent framework" is more citable than "how to build an agent with our SDK." The former is indexed as opinion; the latter is indexed as marketing.

The teams compounding this layer in 2026 publish one deep architectural post every two weeks, always co-authored by an engineer (not a DevRel writer), and treat AI-answer citations as the top-line metric.

There is a content typology that maps to this surface, and most teams confuse the slots. The five formats that earn AI-engine citations are: (1) decision logs, the public record of a hard technical tradeoff your team made and the data behind it, (2) benchmark posts, where you measure two or more approaches on a reproducible test bed and publish the numbers with the code, (3) failure post-mortems, where you document an outage or a wrong call publicly, (4) architecture explainers, where you describe a non-obvious internal system component in detail, and (5) comparison teardowns, where you place your product alongside two or three named alternatives and walk through the engineering tradeoffs. Notice what is absent: feature launch announcements, founder Op-Eds, "10 ways to use X" listicles, and "introducing Y" launch posts. Those formats are indexed by Google as marketing pages and are systematically deprioritized by the AI answer engines, which over-weight technical specificity and source-level credibility in their retrieval scoring.

The docs-as-marketing principle deserves its own line. Mintlify and Fern's 2026 developer-docs benchmark put docs quality at a 0.6-plus correlation with trial-to-paid conversion, but the more interesting finding inside that data set is that docs pages with embedded runnable code snippets out-rank static docs pages by a factor of three for high-intent dev-tool queries. The implication is operational: every docs page should embed at least one runnable code block, ideally one that runs in a browser-side sandbox (StackBlitz, Codesandbox, the in-browser Pyodide runtime), so the page is simultaneously a docs reference and a working demo. The teams who treat docs as a static reference asset are losing the docs SERP to the teams who treat docs as an interactive surface.

The dev-tool TOFU pattern that compounds is engineer-authored opinion content cross-posted to Hacker News, Lobsters, and r/programming, then surfaced again as the "see also" footer of every cookbook notebook and every reference repo README. The cross-posting matters because the AI answer engines weight aggregator citations heavily: a single Hacker News front-page placement on an architectural post has been observed to lift the post's appearance rate in ChatGPT and Claude answers by a measurable factor over the following thirty days. Aggregator distribution is not a vanity move on this surface; it is a retrieval-engine input.

Related: Rohit Ghumare, "The Ultimate DevRel Guide: Build Thriving Developer Communities in 2025", the data-driven playbook this post extends to AI-builder audiences.

Surface 5: The integrations guild is the distribution multiplier

The fifth surface is the one that separates AI startups that plateau from the ones that compound into the category leader slot. A formal partner program, not a BD pipeline, not ad-hoc co-marketing, where your product is a named, documented integration in every adjacent AI tool's reference stack.

The anchors of an AI integrations guild in 2026 are predictable: LangChain, LlamaIndex, Vercel AI SDK, Supabase, a handful of orchestration/observability tools (LangSmith, Phoenix, Helicone), and the two or three AI framework repos relevant to your layer. The goal is to be listed in each of their docs, their notebook examples, and their hackathon co-sponsor rotations. Each listing sends traffic back to surface 1, the notebook that onboards the developer who arrived via the integration.

Compounding tells: integration count per quarter, mutual referrals, and the share of your total weekly new-developer signups that arrive with an integration-sourced UTM. When that share crosses 30%, the flywheel is compounding.
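The 30% tell can be computed directly from signup landing URLs. This is a minimal sketch under one assumption the text does not make: that integration-sourced traffic is tagged with `utm_medium=integration`. Swap in whatever UTM convention your partner links actually use.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical tagging convention for partner-integration links.
INTEGRATION_MEDIUM = "integration"


def integration_share(signup_urls: list[str]) -> float:
    """Fraction of signups whose landing URL carries the integration UTM tag."""
    if not signup_urls:
        return 0.0
    hits = 0
    for url in signup_urls:
        params = parse_qs(urlparse(url).query)
        if INTEGRATION_MEDIUM in params.get("utm_medium", []):
            hits += 1
    return hits / len(signup_urls)


def is_compounding(share: float, threshold: float = 0.30) -> bool:
    """True once the integration-sourced share crosses the 30% line."""
    return share > threshold
```

Computed weekly over new-developer signups, the share gives a single number to track against the threshold.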

The GitHub README as the highest-leverage SEO surface

A separate note on the README, because it is the single most underestimated SEO asset most AI startups own. The README at the root of your reference repo, your cookbook, your SDK, and your MCP server is indexed by Google with GitHub's full domain authority transferred to the page. Every internal anchor link inside the README is treated as an internal site link. Every external link out is a backlink earned on your behalf. Every fork is a citation. Every star is a behavioral signal. The README is, structurally, a one-page landing site sitting on a DR-100 host.

The README that ranks has six blocks in a fixed order, and skipping any block costs ranking. First, a one-sentence value statement that includes the primary keyword you want to rank for. Second, a 90-second video or animated GIF demo, embedded at the top of the README before any installation instructions, because the GitHub social-preview card uses the first image it finds. Third, a quickstart that runs to first output in under five commands and works on a default Mac or Linux box without configuration. Fourth, a "what this does" block written in problem language, not feature language. Fifth, a comparison table to two or three named alternatives, with honest tradeoff annotations, because that table is what AI answer engines retrieve verbatim when developers ask "X vs Y." Sixth, a "see also" footer linking out to your architectural blog posts, your cookbook notebooks, your MCP registry listing, and your hackathon page. That footer is what closes the loop between surface 2 and the rest of the flywheel.
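The six-block order lends itself to a simple lint. The sketch below is one way to check it, with loud assumptions: the heading names it looks for ("Quickstart", "What this does", "Comparison" or "Alternatives", "See also") are hypothetical conventions, the value statement is approximated as the first plain prose line, and the demo is detected as the first image or video tag.

```python
import re

FLAGS = re.MULTILINE | re.IGNORECASE

# One (name, pattern) pair per block, in the order the checklist gives.
# All heading names here are assumed conventions, not a standard.
BLOCKS = [
    ("value statement", re.compile(r"^(?!#|!\[|<|\||`|-)\S.*$", FLAGS)),
    ("demo video or GIF", re.compile(r"^!\[[^\]]*\]\([^)]+\)|<img\b|<video\b", FLAGS)),
    ("quickstart", re.compile(r"^#+\s*quick\s*start", FLAGS)),
    ("what this does", re.compile(r"^#+\s*what this does", FLAGS)),
    ("comparison table", re.compile(r"^#+\s*(comparison|alternatives)", FLAGS)),
    ("see-also footer", re.compile(r"^#+\s*see also", FLAGS)),
]


def lint_readme(text: str) -> list[str]:
    """Report blocks that are missing or that appear earlier than they should."""
    problems = []
    last_pos, last_name = -1, None
    for name, pattern in BLOCKS:
        match = pattern.search(text)
        if match is None:
            problems.append(f"missing block: {name}")
            continue
        if match.start() < last_pos:
            problems.append(f"out of order: {name} appears before {last_name}")
        else:
            last_pos, last_name = match.start(), name
    return problems
```

An empty result means all six blocks were found in order. The lint says nothing about whether the quickstart actually runs or the comparison is honest, which still needs a human read.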

The cadence is also load-bearing. A README that has not been touched in 90 days reads as abandoned to a developer triaging tools at 11 pm on a Sunday. The teams running the flywheel update the README every time the SDK ships a meaningful version bump, every time a new notebook is added to the cookbook, every time a new MCP registry listing goes live. The commit history on the README is itself a behavioral signal, both to GitHub's discovery algorithm and to the developer reading it.

The 5 mistakes that stall AI DevRel flywheels

Across 9 AI DevRel audits at FORKOFF in 2025-2026, the same five mistakes showed up repeatedly. Naming them matters: each is a specific, fixable failure mode, not an abstract strategy problem.

- Hiring a DevRel team before the founder ships the first notebook. The first 30 notebooks should come from the founding engineers; that's what makes the content credible. Hiring a DevRel writer first creates notebooks that read like marketing and convert negatively.

- Treating docs as the funnel, notebooks as a nice-to-have. In AI, it's inverted: notebooks are the funnel, docs are the reference. Allocate engineering time to notebooks at a 3:1 ratio over docs in the first year.

- Running hackathons for branding instead of retention. A hackathon with 30-day retention below 15% is not DevRel; it's sponsorship. Measure 30-day retention per event and kill events that drop below the threshold.

- Publishing feature-launch blog posts instead of architectural posts. Feature posts are indexed as marketing; architectural posts are indexed as opinion and earn AI-engine citations. Switch the ratio: 1 feature post per 4 architectural posts is about right.

- Skipping the integrations guild for "we'll do partnerships later." The integrations guild is not a partnership function; it's a distribution surface. The AI startup that ships integrations in its first 90 days beats the one that waits until Series B.

The 90-day install sequence, week by week

The flywheel does not start as a flywheel. It starts as a sequence, and the order of operations matters more than the speed of any one piece. The install sequence we run inside FORKOFF AI DevRel engagements is concrete enough to time-box.

Week 1 to 2. Founding-engineer audit and notebook scoping. The two founding engineers spend ninety minutes per day for two weeks writing the first three notebooks together, in a single Colab document, paired. The pairing is deliberate: the notebooks that earn trust read like a senior engineer's working notes, not like marketing copy, and pairing is the fastest way to surface the technical voice. The deliverable at end of week 2 is three published notebooks in a public cookbook repo with a working README.

Week 3 to 4. Reference repo scaffold. One founding engineer is allocated full-time to the reference repo for two weeks. The repo must boot to first output in under five commands, must include a Codespaces or Replit "open in" button, must publish an MCP server, must include a Mermaid architecture diagram in the README, and must list in the relevant MCP registries by the end of week 4. The deliverable at end of week 4 is a forkable repo with at least one external fork from a friendly developer in the founding team's network.

Week 5 to 6. Hackathon co-sponsor outreach and content calendar lock. The DevRel-engineer hire (the first dedicated DevRel headcount, hired only after the founding engineers have shipped surfaces 1 and 2) is onboarded in week 5 and immediately tasked with two parallel tracks: co-sponsor conversations with three adjacent AI tools for a hackathon to run in week 8, and a four-post architectural content calendar for weeks 7 through 14. The deliverable is one signed co-sponsor and four briefs.

Week 7 to 8. First hackathon and first architectural post. The hackathon runs as a 48-hour online event with one theme tied to a new capability shipped in week 6. The first architectural post lands on the same Monday the hackathon opens, so the post functions as both content and event collateral. The deliverable is one shipped hackathon with at least fifty submissions and one architectural post indexed by Google within seventy-two hours.

Week 9 to 10. Integrations guild build-out. The DevRel engineer assembles the prioritized list of fifteen adjacent AI tools (LangChain, LlamaIndex, Vercel AI SDK, Supabase, the orchestration and observability layer, the cookbook ecosystem, the agent framework repos) and runs structured outreach to land integrations in the next ninety days. The deliverable is a named-owner spreadsheet with quarterly targets and three integrations in active negotiation.

Week 11 to 12. Compounding-metric review and surface rebalancing. The team reviews the five compounding metrics (stars, forks, citations, hackathon retention, integration count) against the week-1 baseline and rebalances effort to the under-performing surface. If notebooks are under-performing, more pairing time on cookbook content. If references are under-performing, a second reference repo for the second use case. If hackathons are under-performing, kill the format and pivot to a smaller invite-only builders session. The deliverable is a written rebalance memo and a week-13 onward plan.
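The rebalancing step can be reduced to a comparison against the week-1 baseline. This sketch assumes "under-performing" means the lowest growth ratio (current value divided by baseline), which is one reasonable reading rather than a rule stated in the text. The metric keys and the metric-to-surface mapping are illustrative names.

```python
# Assumed mapping from each compounding metric to the surface it measures.
METRIC_TO_SURFACE = {
    "stars": "notebooks",
    "forks": "open-source references",
    "hackathon_retention": "hackathons",
    "citations": "content DevRel",
    "integration_count": "integrations guild",
}


def growth_by_surface(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Growth ratio per surface: current metric divided by its week-1 baseline."""
    growth = {}
    for metric, surface in METRIC_TO_SURFACE.items():
        base = baseline[metric]
        if base > 0:
            growth[surface] = current[metric] / base
        else:
            # A zero baseline cannot be divided; any gain counts as unbounded growth.
            growth[surface] = float("inf") if current[metric] > 0 else 0.0
    return growth


def weakest_surface(baseline: dict[str, float], current: dict[str, float]) -> str:
    """Name the surface with the lowest growth ratio, the candidate for extra effort."""
    growth = growth_by_surface(baseline, current)
    return min(growth, key=growth.get)
```

The output names where to look first; the rebalance memo still has to explain why that surface lagged before effort is moved.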

By end of week 12, all five surfaces are running, the metrics are baselined, and the flywheel either is or is not compounding. The honest read at the day-90 checkpoint determines whether the engagement extends, the team rebuilds, or the founder accepts the underlying constraint (usually: the product itself is not ready for a DevRel surface, and the work flips back to engineering).

How we run the Flywheel with AI startups at FORKOFF

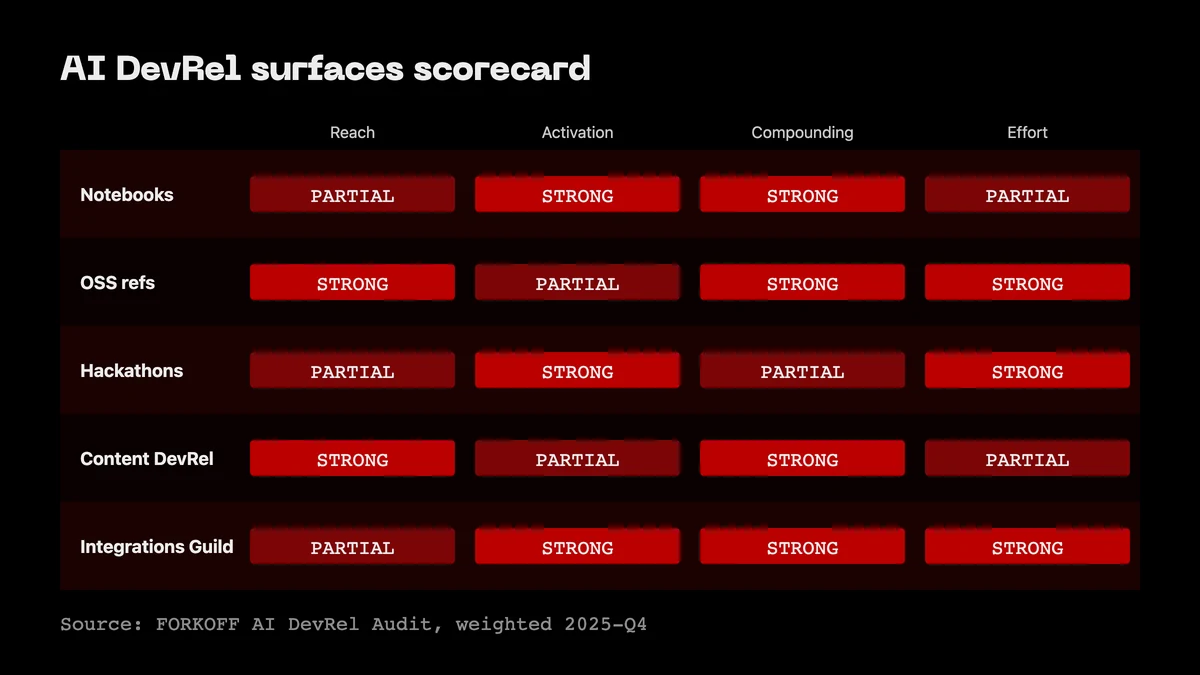

Every AI DevRel engagement at FORKOFF starts with a 5-surface audit. We score each surface 1-5, measure the compounding metric (stars, forks, citations, integration count) over the last 90 days, and surface the one under-invested layer costing the most compounding.

Then we install. Notebooks first: a content cadence, a review rubric, and a backlog of 12 use cases drawn from the team's own support tickets. Open-source refs second: one canonical reference implementation, shipped within 30 days, treated as a product, not content. Hackathons third: the first one co-sponsored for signal, the next four owned. Content DevRel fourth: biweekly architectural posts, always engineer-authored. Integrations guild fifth: a prioritized list of 15 adjacent AI tools with named owners and a quarterly target for how many go live.

By the second quarter, the typical AI startup engagement shows 2-3x week-over-week GitHub-star growth, cookbook activation north of 45%, and integration count 3-5x the baseline. Two related FORKOFF reads if you want the operator view: the Founder Funnel OS (which is the founder-voice layer feeding surface 4 of the flywheel) and the Founder Growth service page for how we staff AI DevRel engagements.

The Bottom Line

AI DevRel is not API DevRel scaled down. It is a different operating system that plays by different rules and compounds on different signals.

The AI DevRel Flywheel is the 5-surface OS (Notebooks, Open-source refs, Hackathons, Content DevRel, Integrations guild), and the AI startups that are compounding into category leaders in 2026 are the ones running all five in sync. The ones still copying the API-DevRel docs-plus-evangelists motion are sitting at sub-Series-A ARR with a DevRel org chart that looks impressive and a flywheel that isn't turning.

If you want the 5-surface audit run for you, that's what we do at FORKOFF.

Related FORKOFF reads: agent-native GTM stack, AI DevRel playbook, Founder Funnel OS, VC Portfolio GTM, Agent-Ready Site Audit. References: OpenAI, Anthropic, Stripe.

For the full picture, see the founder-led growth playbook.

For deeper cross-pillar context, see the clipping infrastructure that compounds DevRel touchpoints.
