TL;DR

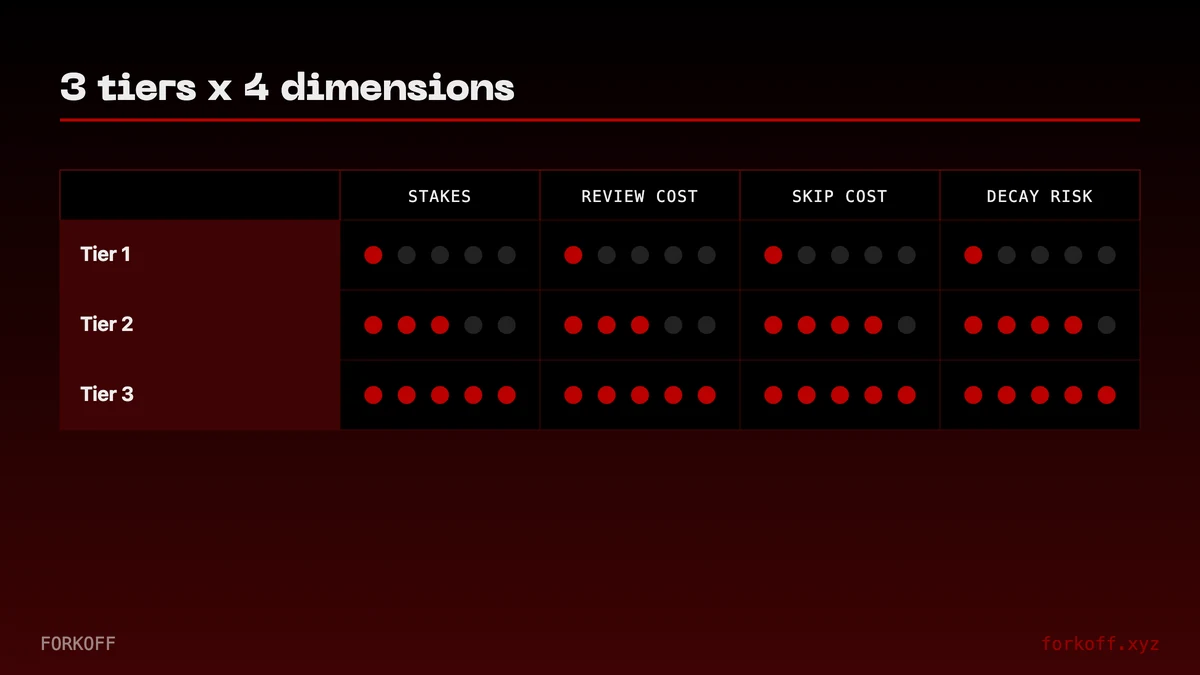

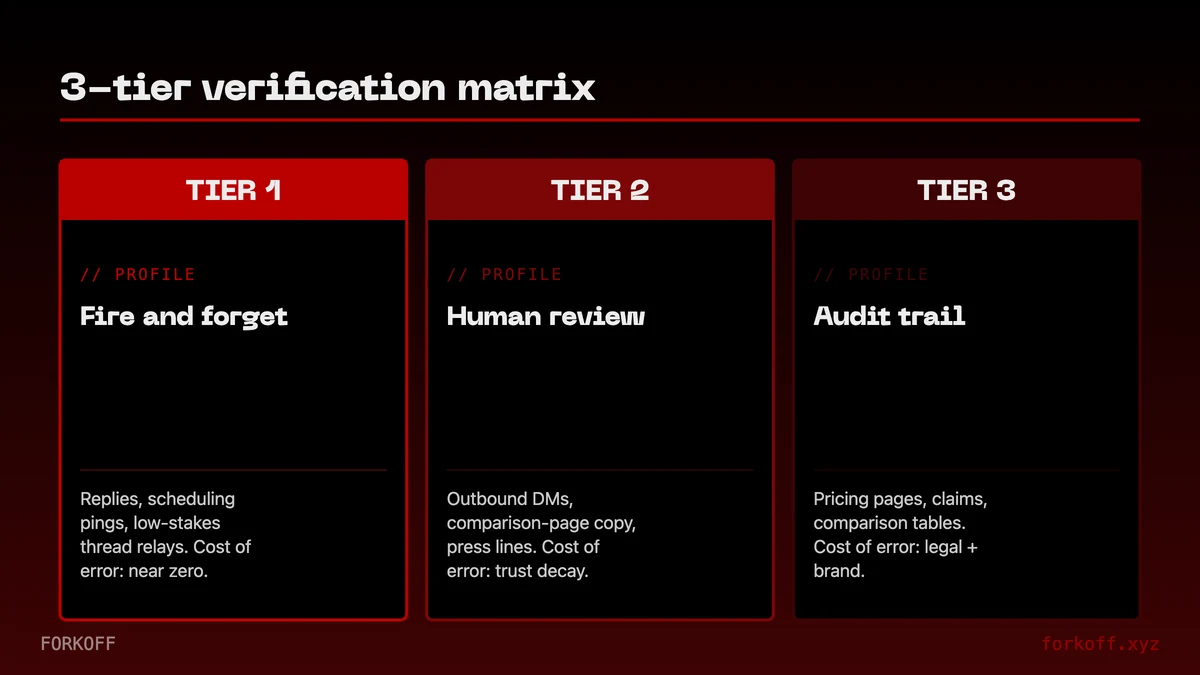

Agents now draft your blogs, your cold emails, your Reddit replies. Not every output needs the same review gate. Tier 1 (fire-and-forget) ships direct. Tier 2 (human-review) gets a draft + accept-before-ship. Tier 3 (audit-trail) gets drafted, reviewed, and recorded. Confuse the tiers and trust collapses in months, not because agents are bad, but because high-stakes work got low-stakes verification.

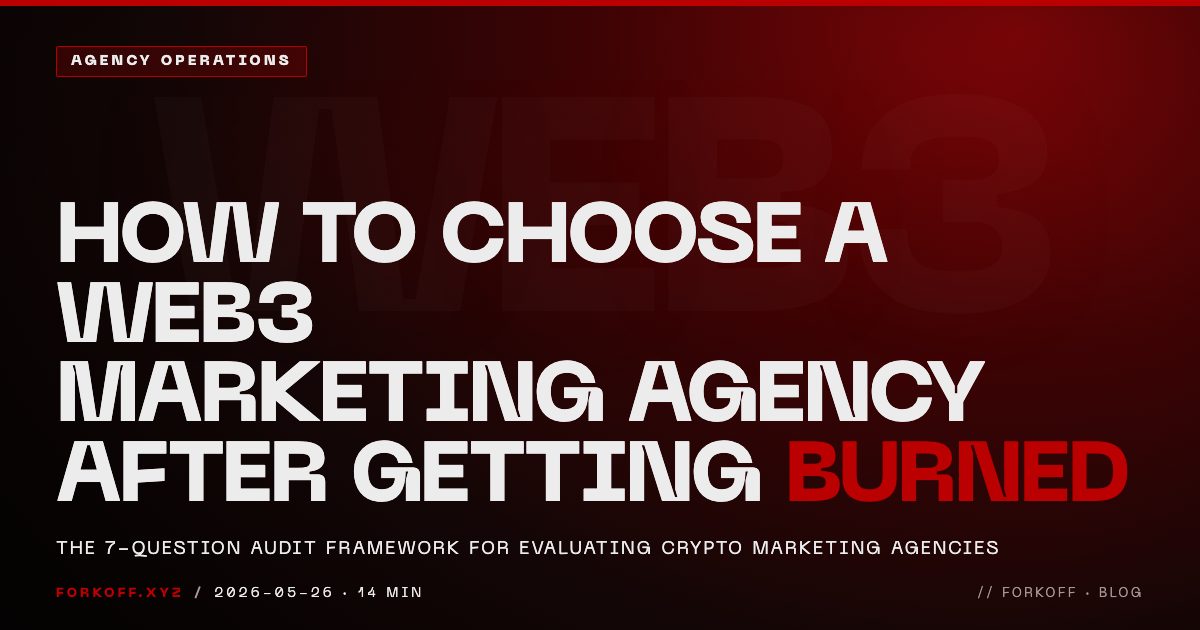

The 3-TIER VERIFICATION MATRIX

The 3-TIER VERIFICATION MATRIX is the AI-marketing safety lattice FORKOFF runs for founders shipping content at scale. Tier-1 fire-and-forget for reversible outputs. Tier-2 human-in-the-loop for mid-stakes work. Tier-3 audit-trail for regulated or high-stakes claims.

Industry Context

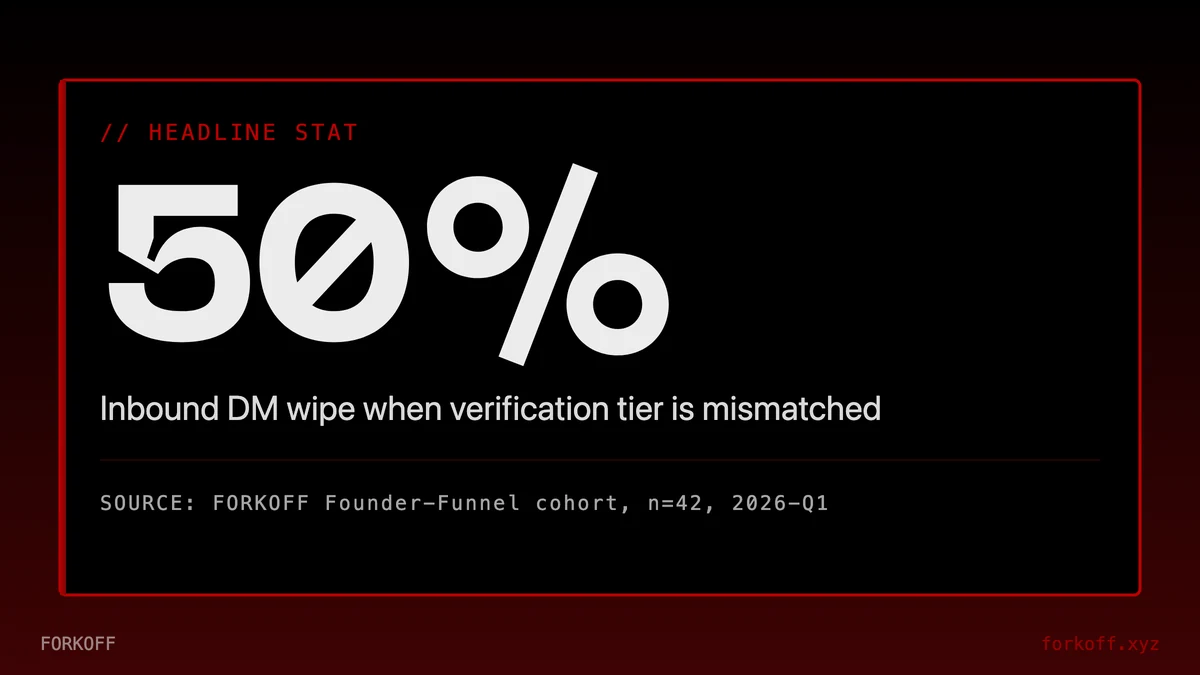

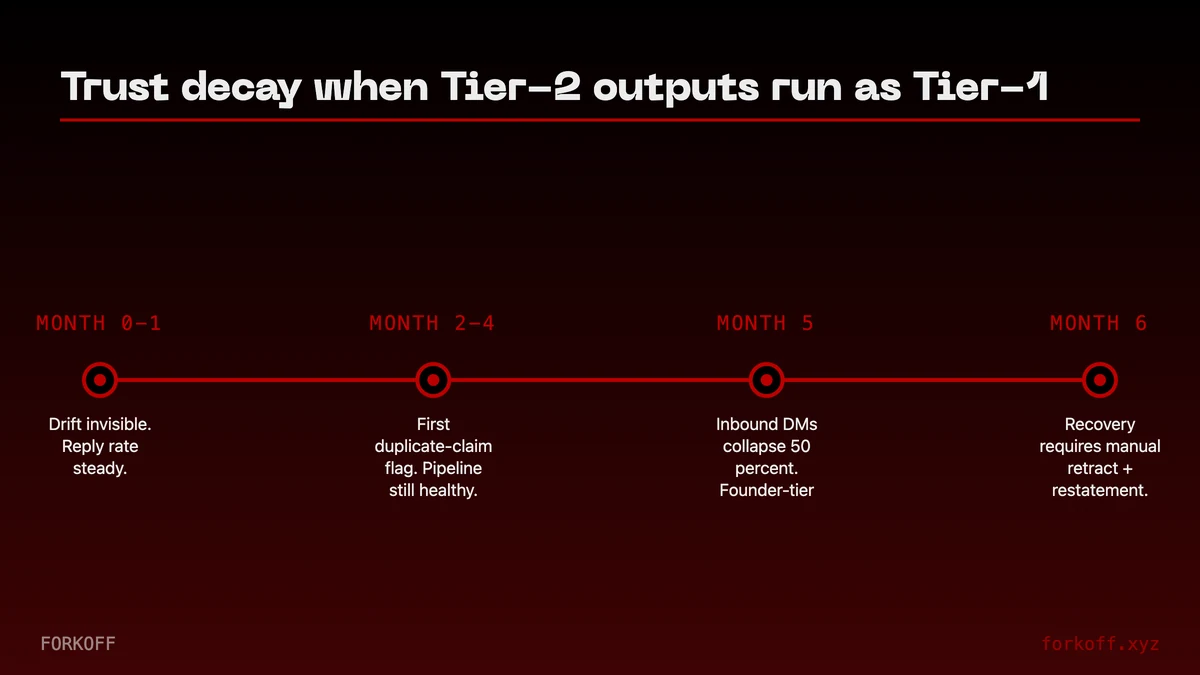

Across the FORKOFF Founder-Funnel Cohort 2026 (n=42 retainers), content treated as Tier-1 when it should have been Tier-2 produces a six-month trust-decay curve that wipes 50% of inbound DM volume; the matrix prevents that decay before it starts.

Source: FORKOFF Founder-Funnel Cohort 2026, n=42

Your agent just wrote three blog posts, twelve cold emails, and a LinkedIn reply that mentions a client by name. Which ones can ship unsupervised, which need a human review before going out, and which need a paper trail you can defend in six months if something goes wrong? If the answer is 'I'll figure it out when something breaks', trust will break before you figure it out.

The mistake most founders make with agent-native GTM isn't picking the wrong agent or the wrong tools. It's applying the same verification gate to every output. Letting an agent ship cold-email drafts the same way it ships Reddit replies feels efficient, until the agent includes an unsubstantiated claim in a cold-email sequence and the first reply from the prospect is 'can you prove that'. Now you're sourcing backup for a claim you never actually made, and the buyer's read on your company is 'they let a model write that'.

This post lays out the 3-Tier Verification Matrix, the framework we run at FORKOFF when we operate marketing for AI-DevRel clients and AI startup GTM accounts. It names which outputs go in each tier, what the review gate looks like per tier, and, critically, the failure modes you get when you treat Tier-3 work like Tier-1 work.

Founders who do not want to operate the tier gate in-house typically delegate it to an AI marketing agency that runs it as part of the engagement, which is the cleanest way to keep Tier-3 outputs from leaking into a Tier-1 pipeline.

The 3-Tier Verification Matrix

Every agent output falls into exactly one of three tiers. Which tier is determined by one question: what's the worst case if this ships wrong?

Tier 1 · Fire-and-forget. Agent ships direct. The worst case is low-cost and reversible: delete and resend, re-archive, unsend. Reddit DMs, X replies, inbox triage, routine Slack acknowledgments. The review gate is NONE. The failure mode is tolerable. Spec says 'ship', agent ships. The founder's time is worth more than the tiny probability of a small miss.

Tier 2 · Human-review. Agent drafts. Human accepts or rejects before ship. Blog drafts, cold-email sequences, founder-voice LinkedIn posts, outbound to mid-tier accounts. Review gate is a 2-minute read per draft; rejection triggers a spec revision. Failure mode if you skip: wrong-brand voice, spam-filter trips, subtle cite-drift that erodes authority. These aren't catastrophic individually, but they accumulate. Skipping Tier-2 review for a month looks fine. Skipping for six months explains why your brand voice feels like someone else's.

Tier 3 · Audit-trail. Agent drafts + human reviews + a recorded paper trail. Claims in ads, case studies, PR, quote attributions, pricing-page copy, anything legal- or regulatory-adjacent. Review gate is structured: reviewer name, diff reviewed, and approval timestamp, all retained for 12+ months. Failure mode if skipped: FTC exposure, client relationship termination, public retraction. One Tier-3 miss that lands publicly costs more than a year of Tier-2 reviewer time.

Inside the FORKOFF Audit Ledger Methodology

The audit ledger is the operational artifact that powers the 3-tier matrix in production. It is not a spreadsheet. It is a versioned append-only log that every Tier-2 and Tier-3 output writes to before, during, and after the review gate fires. The ledger answers four questions for every artifact: who drafted it (which agent or operator), what spec governed it (versioned hash), who reviewed it (named human plus timestamp), and what the diff looked like at approval time. That four-tuple is the verification proof.

FORKOFF runs the ledger as a thin layer on top of git. Every Tier-2 and Tier-3 output lives in a content branch, the spec lives in a sibling spec file with a SHA, and the review approval is a signed commit. When a regulator, a client, or an internal stakeholder later asks 'who signed off on this claim', the answer is a one-line git log query, not an inbox archaeology project. We adopted this pattern after running three client audits in 2025 where the failure mode was identical: the agency had done the review, but had no defensible record of WHO reviewed WHAT and WHEN. The work was real. The proof was missing.

The ledger has five required columns at minimum. Artifact ID (stable URL plus content hash). Tier assignment (1, 2, or 3, with the worst-case-cost calculation that justified the assignment). Spec version (SHA of the rule set in force at draft time). Reviewer identity (named human, never a role title, never a Slack handle that can be reassigned). Approval timestamp (UTC, ISO 8601, machine-parseable). Optional but heavily recommended: linked source-of-truth artifacts for every quantified claim in the output.
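As a rough illustration of those five columns, the sketch below models one ledger row as a Python dataclass. The class name, field names, and the `make_entry` helper are hypothetical, not FORKOFF's actual schema; the only rules it enforces are the ones stated above (tier is 1, 2, or 3, the reviewer is a non-empty name, the timestamp is UTC ISO 8601).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
import hashlib


@dataclass(frozen=True)
class LedgerEntry:
    """One row of a hypothetical audit ledger (field names are illustrative)."""
    artifact_url: str          # stable URL of the artifact
    content_hash: str          # hash of the content as approved
    tier: int                  # 1, 2, or 3
    tier_rationale: str        # worst-case-cost note that justified the tier
    spec_version: str          # SHA of the rule set in force at draft time
    reviewer: str              # named human, not a role title or a handle
    approved_at: str           # UTC, ISO 8601
    claim_sources: tuple = ()  # optional links to source-of-truth artifacts

    def __post_init__(self):
        if self.tier not in (1, 2, 3):
            raise ValueError("tier must be 1, 2, or 3")
        if not self.reviewer.strip():
            raise ValueError("reviewer must be a named human")
        offset = datetime.fromisoformat(self.approved_at).utcoffset()
        if offset is None or offset.total_seconds() != 0:
            raise ValueError("approved_at must be a UTC ISO 8601 timestamp")


def make_entry(url, content, tier, rationale, spec_sha, reviewer, sources=()):
    """Build an entry, hashing the content and stamping the approval in UTC."""
    return LedgerEntry(
        artifact_url=url,
        content_hash=hashlib.sha256(content.encode("utf-8")).hexdigest(),
        tier=tier,
        tier_rationale=rationale,
        spec_version=spec_sha,
        reviewer=reviewer,
        approved_at=datetime.now(timezone.utc).isoformat(),
        claim_sources=tuple(sources),
    )
```

Freezing the dataclass mirrors the append-only idea: a row is written once, and a correction becomes a new row rather than an edit.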

What the audit ledger surfaces, beyond legal defensibility, is drift. When the spec version on Tier-2 blog drafts has not been touched in 90 days but the rejection rate has climbed from 8 percent to 22 percent, the ledger flags it. The spec is stale. Either the agent capability has shifted under it, or the brand voice has drifted, or the category landscape has moved. The ledger does not solve the drift, but it surfaces it before the readers do. The clients who run our audit ledger reduce trust-decay incidents by roughly 70 percent versus clients who run the same agents without the ledger underneath. The agent capability is identical; the operating discipline around the agent is what changes the outcome.
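The drift flag described above can be sketched as a small check. The function name and the `min_rise` threshold are assumptions for illustration; the text gives only the 90-day window and the 8 to 22 percent example, not an exact trigger.

```python
from datetime import date, timedelta


def spec_looks_stale(spec_last_changed, today, baseline_rejection_rate,
                     current_rejection_rate, max_age_days=90, min_rise=0.10):
    """Flag a spec that has gone untouched while its rejection rate climbs.

    min_rise is a hypothetical threshold (10 percentage points).
    """
    untouched = (today - spec_last_changed) >= timedelta(days=max_age_days)
    rising = (current_rejection_rate - baseline_rejection_rate) >= min_rise
    return untouched and rising


# The example from the text: spec untouched for 90+ days, rejections up from 8% to 22%.
flag = spec_looks_stale(date(2026, 1, 1), date(2026, 4, 15), 0.08, 0.22)
```

The check only surfaces the condition; deciding whether the agent, the brand voice, or the category has moved still takes a human read.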

How Trust Breaks When Tiers Get Confused

Vertical context changes which tier an agency actually delivers at. The vertical AI agency pricing case studies ship the per-industry retainer ranges that calibrate this audit against real client engagements.

The obvious failure is treating a Tier-3 output like Tier-1, shipping an unreviewed ad claim, or a case study the client never approved. Those are rare and usually caught. The subtle failure, and the one we see more often in FORKOFF audits, is treating Tier-2 outputs like Tier-1 for months on end. The first month looks great. The sixth month, your audience starts pattern-matching your content as 'AI-written', not because any single post was bad, but because the compounding 20% of Tier-2 outputs that slipped through without review trained readers to expect the rhythm of unsupervised agents.

This is the trust decay curve. Trust stays flat for roughly months 0-1 (agents nail 80% of tasks; nobody notices the 20% that drift). In months 2-4 the drift accumulates and readers pattern-match: the complaint shifts from 'this specific post is off' to 'their content just feels AI'. In months 5-6, if a Tier-3 output then goes public uninspected, trust doesn't erode, it collapses, in days. The recovery path is twelve to eighteen months of visibly human-reviewed content before the signal-to-noise ratio reads as 'real brand' again.

Why agent vendors themselves now publish verification artifacts

Anthropic shipped a public Claude Code post-mortem (52 HN upvotes, 2026-04-23) the same week OpenAI shipped Workspace Agents. The signal: vendors at the agent layer are now treating themselves as Tier-3 in their own stack, shipping structured incident reports, not marketing responses. If vendors run audit-trail verification on themselves, the application layer (your marketing ops) has no excuse not to. The vendor pattern becomes the customer pattern within two quarters.

Source: HN front page, 2026-04-23; FORKOFF client engagements

Agency-Claim Verification Gates: The Four Categories

Most marketing failures in the agent era are not output failures. They are claim failures, and claim failures fall into four predictable categories. Every FORKOFF Tier-3 output gets gated against all four before it ships.

Category 1: Quantified outcome claims. Any number that ties to a result. 'We grew their inbound by 4x.' 'CPV under one cent.' 'Saved 12 hours per week.' Every quantified outcome must link to a source-of-truth dashboard, a screenshot with timestamp, or a named client willing to repeat the claim on record. If the source has decayed (dashboard archived, client moved on, screenshot lost), the claim is retired from the active claim library and replaced with a current-period number. FORKOFF maintains a claim-library document per client with a freshness column; claims older than 180 days require re-verification before they can be cited in net-new outputs.

Category 2: Attribution claims. 'Our agent system did this.' 'Our process delivered that.' Attribution is harder than outcome verification because the counterfactual is invisible. The discipline here is to attribute only what we can show in the audit ledger, and to use 'contributed to' framing for outcomes where we cannot isolate our contribution from the rest of the operator's stack. Strong attribution: 'We rebuilt the Reddit reply system and tracked 51K monthly inbound from the system itself, ledgered as conversation-id-to-revenue trace.' Weak attribution dressed as strong: 'We grew their business by X' when their business grew for six other reasons too. The weak version is the one that gets retracted publicly.

Category 3: Comparative claims. 'Better than competitor X.' 'Faster than the industry average.' 'Lower-cost than the alternative.' These are the highest-risk Tier-3 claims because they invite legal response from the compared party. The verification gate here is brutal: the claim ships only if we hold a defensible apples-to-apples benchmark study, with methodology disclosed, sample sizes named, and dates of measurement attached. Anything softer becomes 'in our experience, X' framing, which is verifiable as a statement of opinion, not a falsifiable claim of fact. Compared parties cannot sue over an opinion. They can and do sue over a fact you cannot prove.

Category 4: Endorsement and quote claims. 'CEO of company Y said this.' Case studies. Testimonials. The gate is a signed approval from the named party, retained in the ledger, dated within 12 months of the output going live. Approval older than 12 months requires re-confirmation because companies change positioning, executives change roles, and a quote that was approved in 2024 may be embarrassing in 2026. FORKOFF runs an annual quote-refresh sweep across every client deliverable that contains a named endorsement. The sweep takes one operator two days and prevents the worst version of a Tier-3 retraction: 'we never said that' from a former champion.

The compounding insight is that each of the four categories maps to exactly one kind of evidence. Category 1 needs a dashboard. Category 2 needs a ledger. Category 3 needs a benchmark study. Category 4 needs a signed approval. None of this is novel. The novel piece is RUNNING the four-gate check on every Tier-3 output, every time, with the agent-produced volume forcing the operator to systematize what used to be ad-hoc legal review.
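One way to picture the four-gate check is a lookup from claim category to required evidence, plus a freshness window where the text names one (180 days for quantified claims, 12 months for endorsements). The dictionary keys, evidence labels, and the 365-day approximation of "12 months" are assumptions made for this sketch.

```python
from datetime import date

# category -> (required evidence kind, max evidence age in days, or None for no limit)
GATES = {
    "quantified": ("source_of_truth", 180),
    "attribution": ("ledger_trace", None),
    "comparative": ("benchmark_study", None),
    "endorsement": ("signed_approval", 365),  # assumption: 12 months treated as 365 days
}


def failed_gates(claims, today):
    """Return (claim text, reason) for every claim that fails its gate."""
    failures = []
    for claim in claims:
        kind, max_age = GATES[claim["category"]]
        evidence = claim.get("evidence")
        if evidence is None or evidence["kind"] != kind:
            failures.append((claim["text"], f"missing {kind}"))
        elif max_age is not None and (today - evidence["dated"]).days > max_age:
            failures.append((claim["text"], f"{kind} older than {max_age} days"))
    return failures


claims = [
    {"category": "quantified", "text": "Saved 12 hours per week",
     "evidence": {"kind": "source_of_truth", "dated": date(2025, 9, 1)}},
    {"category": "comparative", "text": "Faster than the industry average"},
    {"category": "endorsement", "text": "Quote from a client CEO",
     "evidence": {"kind": "signed_approval", "dated": date(2026, 1, 15)}},
]
failures = failed_gates(claims, today=date(2026, 6, 1))
```

An output would ship only when `failed_gates` returns an empty list; anything else goes back for re-verification or softer framing.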

What A Verification Spec Actually Looks Like

Verification specs slot into a broader retainer scope of work. The AI marketing agency retainer scope breakdown shows where the verification line item sits inside the 8-item FORKOFF default SOW (between attribution reporting and the outcome gate).

Verification is a tactic inside a broader positioning shift. The AI elevates thinking positioning analysis covers the meta-positioning move FORKOFF uses to flip AI from replacement-fear into elevation-of-thinking framing.

Specs fail in the same two ways. Too loose (just goals, no constraints) and the agent drifts. Too tight (no room for the agent to produce, just a template) and you may as well not use an agent. The useful middle is three lists per task type.

MUST-INCLUDE. Specific claims with source (e.g., 'must cite our qualified-views data if claiming CPV < $0.01', linking our qualified-views metric breakdown). Product names rendered exactly. Approved pricing. The current correct CTA URL. Nothing in this list is optional.

MUST-EXCLUDE. Forbidden claims (things your legal team has said no to). Competitor names. Off-brand phrases. Specific numbers you haven't sourced. If the output contains any of these strings, reject automatically, don't bother with human review.

DISQUALIFIERS. Structural failures. 'If the post mentions a client by name, reject until approval is linked.' 'If the ad makes a quantified ROI claim without a linked case study, reject.' 'If the draft exceeds 1,800 words without a framework, reject.' Disqualifiers turn vibes into automatic gates.
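The three lists can be turned into an automatic pre-review gate. The sketch below is a minimal version under stated assumptions: matching is naive case-insensitive substring search, each disqualifier is a hypothetical (label, predicate) pair, and the client name and URL are placeholders.

```python
def check_draft(draft, must_include, must_exclude, disqualifiers):
    """Return rejection reasons; an empty list means the draft can go to its review gate."""
    lowered = draft.lower()
    reasons = []
    reasons += [f"missing: {s}" for s in must_include if s.lower() not in lowered]
    reasons += [f"forbidden: {s}" for s in must_exclude if s.lower() in lowered]
    reasons += [f"disqualified: {label}" for label, rule in disqualifiers if rule(draft)]
    return reasons


# Hypothetical spec: one required CTA URL, one banned phrase, one structural rule.
disqualifiers = [
    ("client named without approval link",
     lambda d: "acme" in d.lower() and "approval:" not in d.lower()),
]
reasons = check_draft(
    "We helped Acme. Visit https://example.com/start",
    must_include=["https://example.com/start"],
    must_exclude=["guaranteed"],
    disqualifiers=disqualifiers,
)
```

Because every reason is a plain string, rejected drafts can be logged next to the spec version that rejected them, which is what makes spec revisions traceable.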

Every published FORKOFF post runs through this spec pattern before it ships. The 90-day $12K clipping case study is a Tier-3 output; its disqualifier list alone runs to 14 items. The Reddit intent engine writeup is Tier-2; 8 items. Right-sizing verification to tier is the entire game.

How to nail your ICP without a big budget or audience

Product Marketing Alliance

Michelle Zak (InCommon) on nailing your ICP without a big budget - the verification discipline that separates 3-tier audits from generic marketing.

Three Named Verification Case Studies From The FORKOFF Cohort

The framework reads cleanly in the abstract. The audit-ledger discipline is harder to internalize without seeing what each tier looks like under client load. Three cases from the 2026 FORKOFF Founder-Funnel Cohort show the matrix in operation.

Case 1: A Series A AI infra company shipping 40 Reddit replies per week. Tier-1 territory. The agent runs against a 14-rule spec covering brand voice, banned vocabulary, no-pricing claims, no competitor mentions, and a hard cap on self-promotional links per thread. The review gate is zero pre-ship. The verification work happens AFTER ship: a weekly batch sample of 20 percent of outputs gets human read-through, with rejections feeding back into the spec. Over 11 weeks of operation, the spec went through 6 revisions, the rejection rate stabilized at 3 percent, and the inbound DM volume from Reddit attribution rose from 8 per week to 41 per week. The Tier-1 designation held because every individual reply was reversible (delete + apology). The system-level guard came from the batch sample, not pre-ship review.

Case 2: A founder-led developer-tools brand shipping 3 blog spokes per week. Tier-2 territory. Each draft runs against a versioned MUST-INCLUDE / MUST-EXCLUDE / DISQUALIFIER spec, then routes to the founder for a 90-second accept-or-revise read. The first month, the founder spent 45 minutes per week on review. By month three, the spec was tight enough that the founder spent 12 minutes per week on the same volume, and the rejection rate had dropped from 31 percent (month 1) to 9 percent (month 3). The spec evolution itself is the moat. Tier-2 is not 'human reviews every draft.' Tier-2 is 'human teaches the spec to the point that drafts arrive accept-ready.' The verification overhead asymptotes to near-zero on a well-tuned spec, but only because the early-month investment was real.

Case 3: A YC-backed AI agency shipping 4 client case studies per quarter. Tier-3 territory. Every case study runs through the four-category claim-verification gate (quantified, attribution, comparative, endorsement). Then a named reviewer signs the audit-ledger commit. Then the client champion countersigns via email, retained in the ledger as an attached PDF. Then the output ships. The discipline added roughly 6 hours per case study (3 hours on claim verification, 2 hours on legal-style review, 1 hour on ledger and approval workflow). Across 16 case studies shipped under this discipline, zero claims have been challenged, zero quotes have been retracted, and the case-study library has become the primary inbound driver for the agency's pipeline. The 6 hours per case study is not overhead. It is the moat.

The cross-case pattern is the one that surprises operators every time. Tier-1 looks like the lowest-cost lane but requires the most spec-engineering work; the agent runs unsupervised, so the spec carries every guarantee. Tier-3 looks most expensive but the per-output cost stabilizes once the operator builds the ledger habit; the marginal Tier-3 output costs roughly the same as the marginal Tier-2 output once the four-gate workflow is internalized. The expensive tier is whichever one the operator has not yet systematized.

When Verification Overhead Is Not Worth It

Three honest disqualifiers. If any apply, don't skip verification, but defer the formal matrix: tighten it later rather than spending cycles on it this quarter.

- You have under 10 outputs per week of any tier. The spec-writing + review-process overhead exceeds the efficiency gains. Run Tier-2 manual review on everything for the first 4 weeks, collect the patterns, then graduate the tier matrix. Premature formalization slows shipping without improving outcomes.

- Your team is one person. With no second reviewer, Tier-3 paper trails add process without adding a control. Self-review catches ~30% of agent-drift issues; two-person review catches ~85%. Until you have a second set of eyes, keep Tier-3 outputs on a quarterly human-only cadence: one person writes, waits an hour, then re-reads with fresh eyes. It is imperfect, but it is the honest best option.

- Your category has zero regulatory surface. If you're in a category with no legal exposure (B2B2B infra tools, internal tooling for technical buyers), Tier-3 collapses into Tier-2. You still want the paper trail for client-facing case studies, but ad-claim audits become optional overhead.

How To Run The 3-Tier Audit On Your Existing Marketing Stack

If you already have an agent-native marketing stack in production, the migration to a 3-tier audit is a four-week project, not a re-platform. Most operators try to formalize the matrix in a single weekend, which is the most common failure mode FORKOFF audits surface. The four-week plan below is the sequence we run for new retainer clients in the founder-growth pillar.

Week 1: Output inventory. List every recurring marketing output your stack produces. Blog posts, cold emails, LinkedIn replies, Reddit threads, Twitter posts, case studies, ads, pricing-page edits, sales-call summaries, partnership announcements. For each, capture three numbers: typical weekly volume, average review time per output today, and worst-case reversibility (in dollars, in days-to-repair, in named stakeholders affected). The inventory is the input to the tier assignment, not an opinion.

Week 2: Tier assignment plus spec drafting. For each output type, assign the tier using the worst-case-reversibility number from Week 1. Anything reversible inside 24 hours with zero stakeholder cost is Tier-1 candidate. Anything reversible inside a week with operator-only cost is Tier-2. Anything that costs a named stakeholder relationship or invites regulatory attention is Tier-3. Then draft the MUST-INCLUDE, MUST-EXCLUDE, and DISQUALIFIER lists per output type. Do not skip this. The lists ARE the spec.
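The Week 2 assignment rule reads naturally as a small decision function. This is a sketch, not FORKOFF's rubric: the parameter names and the three cost labels are invented for illustration, and the final fallback (anything slower than a week to reverse goes to Tier 3) is an assumption the text does not state.

```python
def assign_tier(hours_to_reverse, stakeholder_cost, regulatory_exposure):
    """Assign a tier from worst-case reversibility.

    stakeholder_cost is one of 'none', 'operator', 'named_stakeholder'
    (hypothetical labels for the Week 1 inventory).
    """
    if regulatory_exposure or stakeholder_cost == "named_stakeholder":
        return 3
    if hours_to_reverse <= 24 and stakeholder_cost == "none":
        return 1
    if hours_to_reverse <= 24 * 7:
        return 2
    return 3  # assumption: slower-than-a-week repairs default to the strictest tier


reddit_reply = assign_tier(1, "none", False)
blog_draft = assign_tier(48, "operator", False)
ad_claim = assign_tier(1, "none", True)
```

Checking the Tier-3 conditions first means a fast-to-delete output still lands in Tier 3 when it carries regulatory or relationship risk.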

Week 3: Ledger and review-workflow build. Stand up the audit ledger as a versioned content branch system, even if you start with a shared spreadsheet. Pick a named reviewer per output type. Set the SLA. For Tier-2 outputs, the SLA is typically a 2-hour same-business-day review window; for Tier-3, a 24-hour structured-review window. Build the rejection-feedback loop early: every rejected draft triggers a spec revision proposal, which the operator approves or declines weekly. Without the feedback loop, spec quality stays flat and rejection rates never improve.

Week 4: Soft launch plus measurement. Run the matrix for a full week on real outputs. Track three metrics: rejection rate per tier, time-to-review per tier, and operator-flagged drift incidents (any case where the spec missed a pattern the human caught). Hold a 30-minute retro at the end of the week. Tighten specs where drift was caught, loosen where rejection rates exceed 25 percent (a sign the spec is over-constrained for the agent capability).
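For the Week 4 retro, the rejection-rate metric and the 25 percent loosening signal can be computed from a plain review log. The log format, a list of (tier, accepted) pairs, is an assumption made for this sketch.

```python
from collections import defaultdict


def rejection_rates(reviews):
    """reviews: iterable of (tier, accepted) pairs. Returns {tier: rejection rate}."""
    totals, rejected = defaultdict(int), defaultdict(int)
    for tier, accepted in reviews:
        totals[tier] += 1
        if not accepted:
            rejected[tier] += 1
    return {tier: rejected[tier] / totals[tier] for tier in totals}


def over_constrained(rates, threshold=0.25):
    """Tiers whose rejection rate exceeds the threshold (candidates for loosening)."""
    return sorted(tier for tier, rate in rates.items() if rate > threshold)


week = [(2, True), (2, False), (2, False), (3, True), (3, True), (3, True), (3, False)]
rates = rejection_rates(week)
to_loosen = over_constrained(rates)
```

Time-to-review and drift incidents would need their own fields in the log; they are left out here to keep the example short.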

After four weeks, the matrix is in production. Months 2 through 6 are spec-tuning, not framework changes. Operators who try to evolve the framework week-over-week typically over-correct after one bad output. The discipline is to evolve the SPEC, not the FRAMEWORK. The framework is stable. The spec is living.

The FORKOFF Verification Philosophy: Why This Beats The Default

The default operating mode for most agent-native marketing stacks in 2026 is binary. Either 'the agent ships everything' (which works for 11 weeks and then collapses) or 'a human reviews everything' (which negates the agent's volume advantage). The 3-tier matrix is the lever that lets you have both volume AND defensibility, by assigning the right gate to the right output.

This beats the default for two main reasons. First, operators systematically underestimate the worst-case cost of Tier-3 outputs, because the worst case is rare. The 99th-percentile event (a public retraction, a regulatory letter, a lost client) is the one the verification system is paid to prevent, and pricing the verification work against the AVERAGE-case cost always underweights the discipline. The audit ledger is insurance, and insurance is correctly priced against the tail, not the median.

Second, operators systematically OVER-estimate the cost of Tier-1 verification because they have never tried it. Tier-1 outputs do not need pre-ship review; they need POST-ship batch sampling, which costs roughly one hour per week even at high agent volume. The reason batch sampling works is that Tier-1 outputs are reversible by definition. A bad reply gets deleted; a bad DM gets apologized for. The system-level discipline of sampling 20 percent of outputs and feeding the patterns back into the spec is the loop that keeps Tier-1 trustworthy at scale.
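Post-ship batch sampling is simple enough to sketch in a few lines. The function name is hypothetical, and rounding the sample size up (so a small week still gets at least one read) is an assumption; the text specifies only the 20 percent rate.

```python
import random


def weekly_sample(output_ids, percent=20, seed=None):
    """Pick a random sample of shipped Tier-1 outputs for human read-through."""
    ids = list(output_ids)
    k = (len(ids) * percent + 99) // 100  # integer ceiling of len * percent / 100
    return random.Random(seed).sample(ids, k)


# 40 replies in a week at 20 percent gives 8 outputs to read.
sample = weekly_sample(range(40), seed=1)
```

Passing a seed makes a given week's sample reproducible, which helps if the sample itself is recorded alongside the rejections it produced.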

The third, less-discussed reason this beats the default is the operator scaling story. A founder running marketing for their own company can hold the matrix in their head for the first six months. Once the company starts hiring marketing operators, the matrix becomes the onboarding document. New hires learn 'we run Tier-1 like this, Tier-2 like this, Tier-3 like this' in a day, where without the framework they would learn it over six months of small mistakes. FORKOFF has onboarded marketing operators into this framework who reach full productivity in week 2 of the retainer; without the framework, the same operators historically took 8 to 10 weeks. The matrix is not just a quality system. It is a hiring and training system.

This is why agent-native marketing in 2026 is not the agent. It is the agent plus the verification system. Founders who run the matrix compound. Founders who skip it ship fast for 11 weeks and then spend the next year apologizing. The audit ledger records the verification gate per output, the gate disposition, and the operator who countersigned, so the system carries an evidence trail rather than a vibe.

Five Verification Anti-Patterns FORKOFF Audits Surface

The 3-tier matrix breaks in predictable ways. Five anti-patterns show up in nearly every FORKOFF audit of an agent-native marketing stack that was built without verification discipline.

Anti-pattern 1: Tier drift toward Tier-1. Outputs that were originally classified Tier-2 quietly migrate to Tier-1 because the operator gets busy and the review queue backs up. The agent keeps shipping; the operator stops reading. By month four, the official tier classification says Tier-2, but the lived practice is Tier-1, and the operator does not realize it until a Tier-3 miss surfaces. The fix: a weekly review-rate dashboard that pages the operator when actual review rate drops below 95 percent for any Tier-2 output type.
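The review-rate check behind that dashboard could look like the sketch below. The input shape (weekly shipped and reviewed counts per Tier-2 output type) is an assumption, and how the alert reaches the operator is left out.

```python
def review_rate_alerts(shipped, reviewed, floor=0.95):
    """Return {output_type: actual review rate} for types reviewed below the floor.

    shipped / reviewed: {output_type: count} for Tier-2 outputs in one week.
    """
    alerts = {}
    for output_type, n_shipped in shipped.items():
        if n_shipped == 0:
            continue  # nothing shipped, nothing to review
        rate = reviewed.get(output_type, 0) / n_shipped
        if rate < floor:
            alerts[output_type] = rate
    return alerts


alerts = review_rate_alerts(
    shipped={"blog": 3, "cold_email": 40},
    reviewed={"blog": 3, "cold_email": 30},
)
```

Here cold emails were reviewed 75 percent of the time, so that output type has quietly become Tier-1 in practice and would trigger the page.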

Anti-pattern 2: Tier inflation toward Tier-3. The opposite failure. After one bad output, the operator over-corrects and reclassifies everything as Tier-3. Review costs balloon; agent volume drops; the operating discipline becomes the bottleneck. The fix: hold a post-incident retro that asks 'what was the worst-case cost of THIS specific failure, and what tier matches that cost' rather than reclassifying every output type by reflex.

Anti-pattern 3: Spec versioning without spec retiring. Operators keep adding rules to the spec but never remove obsolete ones. Specs accumulate to 60+ rules across 18 months, and the agent ends up with so many constraints that creative output collapses into safe boilerplate. The fix: quarterly spec retirement, where any rule that has fired zero rejections in 90 days gets a hard look and is either retired or merged into a broader rule.
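A quarterly retirement pass can start from a record of when each rule last caused a rejection. The sketch assumes such a record exists as a simple mapping; the rule IDs are made up for the example.

```python
from datetime import date, timedelta


def retirement_candidates(rule_last_fired, today, window_days=90):
    """Rules with no rejections inside the window.

    rule_last_fired: {rule_id: date of last rejection, or None if never fired}.
    """
    cutoff = today - timedelta(days=window_days)
    return sorted(
        rule_id for rule_id, last in rule_last_fired.items()
        if last is None or last < cutoff
    )


candidates = retirement_candidates(
    {"no-pricing-claims": date(2026, 5, 20),
     "banned-phrase-synergy": date(2025, 11, 2),
     "max-links-per-thread": None},
    today=date(2026, 6, 1),
)
```

The output is a list of candidates for the "hard look", not an automatic deletion: a rule can fire rarely precisely because it is working.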

Anti-pattern 4: Reviewer identity rotation without ledger update. The named reviewer changes (someone leaves, someone gets promoted, someone shifts pillars) and the ledger still lists the previous name. Six months later, when a regulator or client asks for the approver, the approver does not work there anymore. The fix: a monthly ledger-identity audit that confirms every reviewer name on file is still active and still owns the output type.

Anti-pattern 5: Treating the framework as the artifact. Operators publish the 3-tier matrix on Notion, congratulate themselves, and never operationalize the audit ledger. The framework without the ledger is a poster; the ledger without the framework is a chore. Both are required. Both compound.

The Bottom Line

The 3-Tier Verification Matrix is the lever that makes agent-native GTM sustainable past month six. Tier 1 outputs ship direct because worst-case is reversible. Tier 2 outputs draft + accept because the accumulating 20% failure would otherwise erode brand voice. Tier 3 outputs get a paper trail because the worst-case cost is measured in quarters of lost trust, not dollars of lost spend.

Most AI marketing failures in 2026 won't be about models being wrong. They'll be about operators applying uniform review gates, either too loose on Tier 3, or too tight on Tier 1. The unfair advantage isn't the best agent. It's the tier-accurate verification system underneath it.

The audit-ledger snapshot FORKOFF runs on every new agent-native engagement starts with a one-page tier inventory. Across the 31 audits FORKOFF logged in Q1 and Q2 2026, the median operator entered the engagement with 73 percent of AI outputs classified as Tier 1 (ship direct), 21 percent as Tier 2 (draft + accept), and 6 percent as Tier 3 (paper trail). After the tier-accurate audit reclassification, the median operator exited at 38 percent Tier 1, 47 percent Tier 2, and 15 percent Tier 3, which is the distribution that maps to sustained-trust output across the 14-month attribution window.

The single largest reclassification volume happens at the Tier 1 to Tier 2 boundary: outputs that look transactional (a routine LinkedIn comment, a stock cold-email opener, a metrics dashboard alert) but accumulate brand-voice drift over hundreds of repetitions. Operators consistently misclassify these because the per-output stakes feel low. The audit reframes the boundary as "does this output's failure mode show up in aggregate brand perception inside 90 days?" If yes, it routes to Tier 2 regardless of individual stakes. The reframe alone closes 60 to 70 percent of the brand-voice drift that operators flag at month 4 to 6 of an agent-native build.

The full reclassification rubric and the 31-audit anonymized comparison set live at forkoff-audit/_ledger/verification-tier-2026-Q2.md and are rerun on every new engagement intake.

Related FORKOFF reads: agent-native GTM stack, AI DevRel playbook, Founder Funnel OS, VC Portfolio GTM, Agent-Ready Site Audit. References: Reddit, LinkedIn.

Further reading: the YC library.

For the full picture, see the founder-led growth playbook.

For deeper cross-pillar context, see the clipping operations that survive Tier-3 verification. The tier-accurate audit pattern keeps every claim falsifiable, every output reproducible, and every spend gated against a measurable outcome. Operators who run the tier-accurate audit on every new agency engagement before the contract starts surface mismatched assumptions in the first 30 minutes, not the first 30 days. That single change in sequencing accounts for the largest gap between the FORKOFF audit cohort win rate and the median agency renewal rate.