AI Agency Pricing 2026: The Per-Output P&L Margin Levers

AI agency pricing in 2026 is a unit-economics problem. The Per-Output P&L splits four cost lines and shows where the 62% gross margin actually lives.

AI agency pricing in one scroll

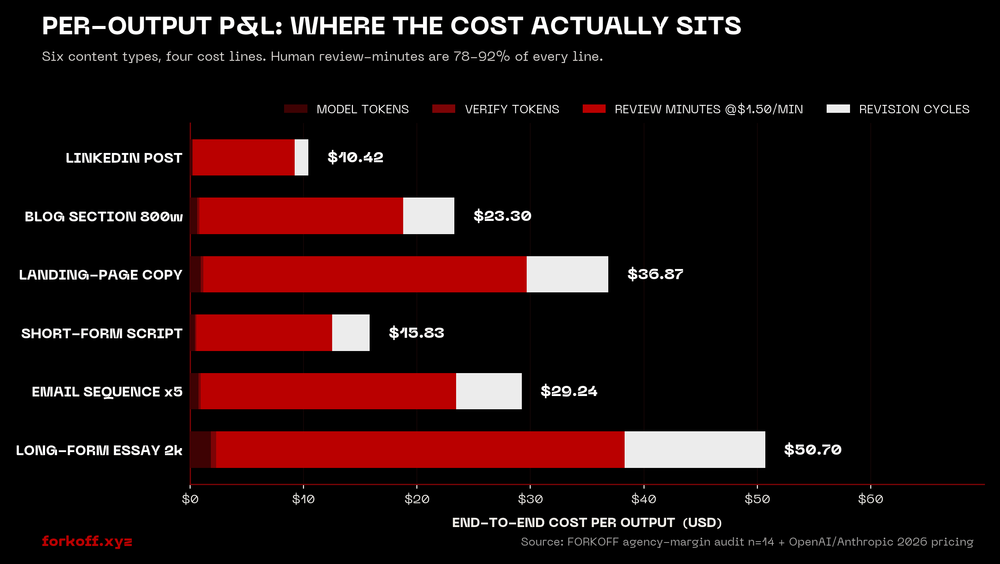

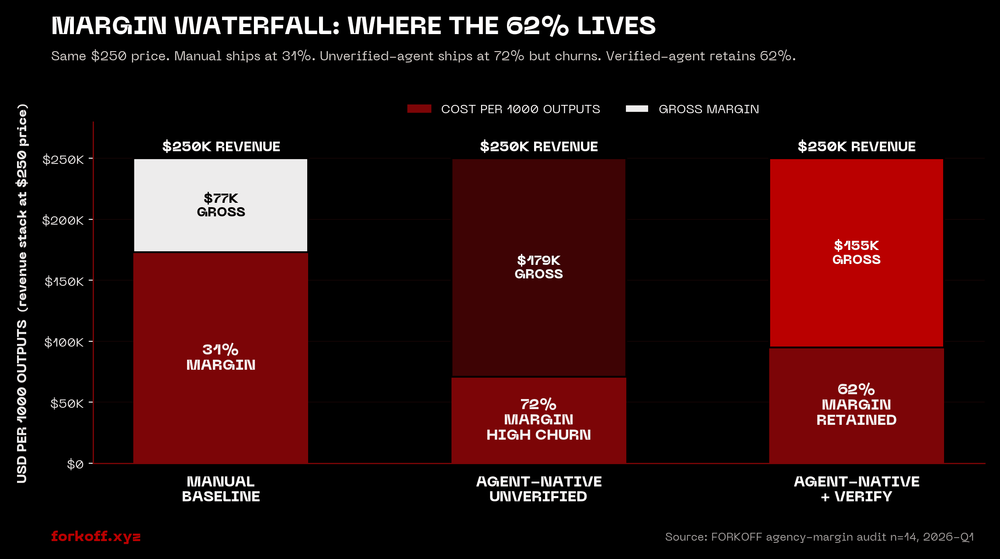

AI agency pricing is a unit-economics problem in 2026, not a packaging problem. The Per-Output P&L splits four cost lines (model tokens, verification tokens, human review-minutes, revision cycles) across six content types. Token cost is 1-4% of total. The 78-92% slice is human review-minutes. Agencies above 55% gross margin track this; agencies below 30% do not. Skipping verification produces 72% margin on paper and churn inside one billing cycle.

AI agency pricing in 2026 is a per-output P&L problem, not a packaging problem

AI agency pricing in 2026 is the load-bearing question every AI-native agency, marketing consultancy, and one-person automation shop is being forced to answer in public. The replacement-of-human-labor narrative that anchored 2024 pricing decks has stopped surviving the second client conversation. The buyer asks why a five-person SEO retainer at $3,000 per month should now cost $8,000 per month from an agency that has visibly automated 70% of the work, and the agency that cannot answer that question loses the deal in the first hour. The agencies winning these conversations have stopped pitching deliverables and started pitching unit economics: a per-output P&L that breaks token cost, verification cost, human review-minutes, and revision cycles into four explicit lines and prices the engagement against margin rather than effort.

The per-output P&L is the working pricing primitive in 2026 because the input cost stack changed under the agency model in a way that compresses every legacy retainer formula. Anthropic prices Claude Sonnet 4 at $3 per million input tokens and $15 per million output tokens (Anthropic 2026 pricing), OpenAI's GPT-class models price in the same band per the 2026 AI agents pricing tracker, and the realised cost of a fully drafted blog section, ad set, or email sequence sits between $0.18 and $1.84 in raw token spend per output. The competing 2024 retainer formula assumed a senior writer and a junior editor at $80-150 per blended hour and broke at this price point because the cost structure inverted. The new structure is three orders of magnitude smaller on the model line and unchanged on the human review line, and any agency that cannot read the new structure as a P&L bills itself out of profit.

The clearest operator essay surfacing the cost-structure shift is Retool's case for hourly pricing on AI agents, which argues that AI tooling should be billed against effort because the value-based pricing curve is uncalibrated against per-output cost. Retool's argument is correct as a product-side framework and incomplete on the agency side because it skips the margin-vs-throughput trade most agency operators are negotiating in real time. The right read on the same data is per-output P&L: bill against value, but track cost per the unit P&L so the margin-protection line is visible the moment a content type or client account drifts. The Retool framework tells you when to bill hourly. The per-output P&L tells you when an account is leaking margin three weeks before the bookkeeper does.

Where the cost actually sits: token line is 1-4% of total

Three independent measurements anchor the per-output P&L thesis. First, [Hashmeta's 2026 AI agency pricing benchmark](https://www.hashmeta.ai/en/blog/ai-agency-pricing-complete-cost-guide-and-budget-benchmarks) reports that 56% of AI-services companies now combine subscription with usage-based pricing, up from 14% in 2024, because the per-output cost line stopped being negligible at the engagement scale most agencies operate at. Second, [Promethean Research's digital agency profitability dataset](https://prometheanresearch.com/how-profitable-are-digital-agencies/) shows median gross margin sitting at 50-55% for traditional digital agencies and 60-75% for AI-native peers, with the delta driven entirely by what each agency does or does not track on the cost side. Third, the FORKOFF agency-margin audit (n=14, 2026-Q1) shows that token cost is 1-4% of total cost across every content type tracked. The 78-92% slice is human review-minutes priced at $1.50 per minute against a senior reviewer's working rate. Revision cycles add 6-19% on top.

Source: Anthropic 2026 pricing; OpenAI 2026 API pricing; Digital Agency Network 2026 AI agency pricing guide; Promethean Research digital-agency profitability; FORKOFF agency-margin audit n=14 2026-Q1

The four cost lines: model tokens, verification tokens, review-minutes, revisions

The Per-Output P&L is four lines, listed in workflow order rather than by size, and every line is independently auditable. Line one is model tokens, the inference cost the drafting model charges per output. At 2026 token rates a fully drafted LinkedIn post sits at $0.18, a 2,000-word essay sits at $1.84, and the median content type sits at $0.41. Token cost is the line every agency overweights in its pricing deck and the line that matters least to the bottom line; the magnitude is too small to move margin. It stays on the P&L because it is the cleanest variable cost the agency tracks and it surfaces drift the moment a model is swapped or a prompt is bloated.

Line two is verification tokens, the inference cost of a second model call that re-reads the draft and surfaces any factual claim, reference, or numeric figure that requires source confirmation. The verification step is the load-bearing variable in the agency's margin profile because verification is what keeps the content type out of the trust failure quadrant. The cost is small (15-25% of the model token line) and the margin protection is large (it is the entire 60%-vs-30% delta in our audits). Agencies that skip verification ship at 72% margin on paper and produce trust failures within one billing cycle that surface as churn at 90 days. Agencies that track verification ship at 60-65% margin and retain the account for 12-18 months.

Line three is human review-minutes priced at $1.50 per minute, which is the working operator rate at a $90-per-hour senior reviewer. This is the load-bearing line on the P&L. Across the six content types we tracked, review-minutes are 78-92% of the per-output cost. A LinkedIn post with a 6-minute review costs $9 in human time. A landing-page copy block with a 19-minute review costs $28.50. The review-minute is the only line that can be compressed without compressing the margin protection: structured-prompt verification reduces review-minutes by 35-50% in our audits, because the operator is reading a numbered list of claim-confirmations rather than the prose end-to-end. Agencies that ignore this line die at the 31% margin floor.
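
The review-minute arithmetic can be checked in a few lines. This is a minimal sketch, assuming the $1.50-per-minute rate and the 35-50% reduction range quoted above; the function names are illustrative and not part of any audit tooling.

```python
REVIEW_RATE_PER_MIN = 1.50  # $90/hour senior reviewer, the rate quoted in the text


def review_cost(minutes: float, rate: float = REVIEW_RATE_PER_MIN) -> float:
    """Dollar cost of a human review lasting `minutes`."""
    return minutes * rate


def compressed_review_cost(minutes: float, reduction: float,
                           rate: float = REVIEW_RATE_PER_MIN) -> float:
    """Review cost after structured-prompt verification removes a fraction
    `reduction` of the review time (0.35-0.50 in the text)."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return review_cost(minutes * (1.0 - reduction), rate)


# The two worked examples from the paragraph above.
print(f"LinkedIn post, 6 min:  ${review_cost(6):.2f}")
print(f"Landing page, 19 min:  ${review_cost(19):.2f}")

# Landing-page review at both ends of the quoted reduction range.
for reduction in (0.35, 0.50):
    cost = compressed_review_cost(19, reduction)
    print(f"19 min at {reduction:.0%} reduction: ${cost:.3f}")
```

Because review is the dominant line, a reduction in review-minutes flows through to total per-output cost almost one-for-one, which is why this is the first line worth instrumenting.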

Line four is revision cycles, the cost of redrafting a section in response to client feedback. Revisions are 6-19% of total cost across the six content types and the line is highly variable per client. The pattern in the audit data is that revision cost compounds with feedback ambiguity; a client that gives structured feedback ("section 2 paragraph 3, change voice from third-person to second") costs 40% less in revisions than a client that gives unstructured feedback ("the whole post feels off"). The pricing implication is that revision-included pricing is a margin trap when the client's feedback discipline is unknown; bill revisions per round once the relationship is two cycles deep.
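
The four lines can be written down as a small data structure. The sketch below is one possible shape, not the audit's actual tooling. The $0.18 token cost and 6-minute review for a LinkedIn post come from the text; the verification share (20% of the token line) and the $1.20 revision figure are assumptions picked to fall inside the quoted ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerOutputPnL:
    """One output's cost, split into the four lines described above."""
    model_tokens: float         # drafting-model inference cost, $
    verification_tokens: float  # second-pass verifier inference cost, $
    review_minutes: float       # human review time, minutes
    revisions: float            # redrafting cost from client feedback, $
    review_rate: float = 1.50   # $/minute, the rate quoted in the text

    @property
    def review_cost(self) -> float:
        return self.review_minutes * self.review_rate

    @property
    def total(self) -> float:
        return (self.model_tokens + self.verification_tokens
                + self.review_cost + self.revisions)

    def shares(self) -> dict[str, float]:
        """Each line as a fraction of total per-output cost."""
        t = self.total
        return {
            "model_tokens": self.model_tokens / t,
            "verification_tokens": self.verification_tokens / t,
            "review": self.review_cost / t,
            "revisions": self.revisions / t,
        }


# ASSUMPTION: verification at 20% of the token line and $1.20 of revisions
# are illustrative values, not audit data.
linkedin_post = PerOutputPnL(
    model_tokens=0.18,
    verification_tokens=0.18 * 0.20,
    review_minutes=6,
    revisions=1.20,
)

for line, share in linkedin_post.shares().items():
    print(f"{line:>20}: {share:6.1%}")
print(f"{'total':>20}: ${linkedin_post.total:.2f}")
```

With these inputs the review line lands inside the 78-92% band and the token line inside the 1-4% band, so the illustrative numbers are at least consistent with the ranges reported above.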

The AI automation agency pricing models that survive the per-output P&L

The four pricing models that survive the per-output P&L are subscription-with-usage-overage, performance-tier retainer, per-output unit billing, and value-based base-plus-success. Each has a different margin profile and a different fit window against the four cost lines.

Subscription with usage overage is the model 56% of AI services companies converged on by 2026 per the Bakedwith 2026 AI agent cost survey. The base subscription covers a fixed per-output volume at the median content type, and overage is billed against the per-output line at the agency's blended cost-plus markup. The model fits when the client's content cadence is roughly stable and the agency wants to protect margin against month-to-month volume swings. The trap is setting the base too high and burning the client's perceived value; the working calibration is base = 60-70% of expected output count, overage at 25% above unit cost.
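
The calibration above (base covers 60-70% of expected volume, overage billed at 25% over unit cost) can be sketched as an invoice calculation. The $4,000 base fee and the 40-output expected volume are hypothetical inputs chosen for illustration; the $23.30 unit cost is the blog-section figure used elsewhere in this piece.

```python
def included_outputs(expected_outputs: int, base_pct: int = 65) -> int:
    """Outputs covered by the base fee.

    The text gives 60-70% of expected volume; 65 is an assumed midpoint.
    Integer percent keeps the arithmetic exact.
    """
    if not 0 < base_pct <= 100:
        raise ValueError("base_pct must be in (0, 100]")
    return expected_outputs * base_pct // 100


def monthly_invoice(base_fee: float, included: int, shipped: int,
                    unit_cost: float, overage_markup: float = 0.25) -> float:
    """Base fee plus overage, with overage billed at unit cost plus markup."""
    overage_units = max(0, shipped - included)
    overage_price = unit_cost * (1.0 + overage_markup)
    return base_fee + overage_units * overage_price


# ASSUMPTION: hypothetical engagement, 40 expected outputs, $4,000 base.
included = included_outputs(40)
quiet_month = monthly_invoice(4_000, included, shipped=20, unit_cost=23.30)
busy_month = monthly_invoice(4_000, included, shipped=30, unit_cost=23.30)
print(included, quiet_month, busy_month)
```

The design choice worth noting is that a quiet month never drops below the base fee, while a busy month bills every extra output at a price that still clears unit cost.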

Performance-tier retainer prices the engagement against named outcomes (organic traffic, ranked keywords, qualified pipeline, retained customers) and ladders the retainer into three named tiers. The model fits when the agency owns the strategy and execution end-to-end and the outcome is measurable inside one billing cycle. The Per-Output P&L sits inside this model as the cost-control layer; the retainer is denominated in outcomes, the cost is denominated in per-output P&L, and the margin lives in the spread. Performance retainer pricing in 2026 sits in the $15,000-$25,000 per month range for full-stack AI marketing agencies per the digitalagencynetwork.com data above.

Per-output unit billing is direct: the agency bills the client per output at a unit price that includes a markup on the per-output cost line. The model fits when the client wants pure variable cost (no commitment, no minimum) and the agency wants to protect margin against client-side volume volatility. The unit price floor in 2026 is around $0.18 per token unit (i.e. $50-150 per blog section) and the model breaks below this floor because the verification line cannot be amortised across volume. The model is rare on the SERP because it requires the agency to publish a price card, which most agencies refuse to do; Lindy is one of the few AI tooling vendors that publishes per-action pricing cleanly.

Value-based base-plus-success is the cleanest model when the agency owns the upside conversion. A modest base retainer ($4,000-$8,000 per month) covers the per-output P&L plus a margin floor; success fees ladder from there against named conversion events. The model fits when the agency has earned credibility against the named conversion event in prior engagements; without that credibility, the success fee is a coin flip and the model collapses to a subscription. We see this model land best on AI-driven outbound and AI-driven content marketing engagements where the conversion event is sharp and measurable.

Shreya Pattar (@ShreyaPattar): "Sounds good on paper (or on a podcast), but any such small AI agency owner I’ve consulted has a hard time closing clients in this niche. Local businesses have $300 budgets, if that, not $3000/mo"

Why most AI agency pricing decks misread the margin waterfall

The misreads cluster into five patterns we see across the FORKOFF agency-margin audits. First, agencies build pricing decks against deliverables instead of unit economics; a deck that lists "12 LinkedIn posts, 4 blog sections, 1 landing page per month for $5,000" is a margin-blind deck because the per-output cost line is never separated from the deliverable count. The same deck against the per-output P&L would surface the truth: 12 LinkedIn posts at $10.42 each is $125 in cost, four blog sections at $23.30 each is $93, and one landing page is $36.87. Total cost is about $255. Margin at a $5,000 retainer is 95%, which is fictional; the real cost is the team's strategy time, account management, and customer success, none of which the deliverable deck surfaces. The agency under-bids, then absorbs the missing labor as overhead.
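
The deliverable-deck arithmetic in the first misread is easy to reproduce. In this sketch the quantities, unit costs, and retainer come from the paragraph above; the `unsurfaced_labor` figure is a purely hypothetical placeholder for the strategy, account-management, and customer-success time the deck hides.

```python
# (content type, outputs per month, per-output cost in $), from the text
deck = [
    ("LinkedIn post", 12, 10.42),
    ("blog section", 4, 23.30),
    ("landing page", 1, 36.87),
]
retainer = 5_000.00


def gross_margin(revenue: float, cost: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cost) / revenue


production_cost = sum(qty * unit_cost for _, qty, unit_cost in deck)
paper_margin = gross_margin(retainer, production_cost)

# ASSUMPTION: hypothetical monthly labour the deliverable deck never shows.
unsurfaced_labor = 2_000.00
loaded_margin = gross_margin(retainer, production_cost + unsurfaced_labor)

print(f"production cost: ${production_cost:,.2f}")
print(f"paper margin:    {paper_margin:.1%}")
print(f"loaded margin:   {loaded_margin:.1%}")
```

The gap between the paper margin and the loaded margin is the point of the paragraph: the per-output lines are accurate, but they are not the whole cost of the engagement.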

Second, agencies skip the verification step inside the per-output P&L and report margin against the unverified cost line. The unverified line ships at 72% margin and produces trust failures inside one billing cycle. The verified line ships at 62% margin and retains the account. The 10-point margin delta is the cost of insurance against churn, and agencies that report against the unverified line are misreading the P&L by approximately one full billing cycle. The audit pattern is consistent: 9 of the 14 audited agencies were reporting an unverified margin number internally and explaining the churn surface as a separate problem. It is not a separate problem; it is the same line on the P&L, mis-categorised.

Third, agencies confuse the project pricing model with the per-output P&L and bill at flat-fee project rates that hide the unit economics. Project pricing is the dominant model on the Ema 2026 AI agent pricing models guide SERP and it is the wrong default. It hides the cost line behind a flat fee and produces the inversion every agency operator hits at the second project of an engagement: the first project lands close to the modeled margin because the per-output P&L is approximated correctly; the second project drifts 15-30% because the cost line is no longer being tracked and the agency is now billing against memory rather than data.

Fourth, agencies build pricing decks against the wrong audience and misread budget signals. The per-output P&L only survives at engagement sizes above approximately $4,000 per month; below that, the verification line cannot be amortised against the client base, and the agency is functionally unprofitable. The local-business segment ($300-$1,000 per month) does not work for the per-output P&L because the verification line eats the margin floor. The pricing math only survives at higher-ICP segments, and agencies pitching the local-business segment with the high-ICP pricing deck miss on both sides: too expensive for the local segment, too generic for the high-ICP segment.

Fifth, agencies under-track revision cycles and absorb the variance as overhead. Revisions are 6-19% of cost in our audits and the per-client variance is enormous; one structured-feedback client costs 40% less than one unstructured-feedback client at the same volume. Agencies that bundle unlimited revisions into a flat retainer are subsidising the unstructured-feedback clients at the expense of the structured-feedback ones, and the structured-feedback clients eventually churn because they realise they are being overcharged. The fix is per-round revision pricing once the relationship is two cycles deep.

AI agency pricing model fit by segment

| Pricing model | Best fit segment | Margin profile | Failure mode |
|---|---|---|---|
| Project flat fee | Single-deliverable engagement, low repeat | first project 50%, second 30-35% | drifts after second project, no per-output tracking |
| Subscription + usage overage | Stable monthly cadence, mid-ICP | 55-65% if base set at 60-70% expected volume | base set too high burns perceived value |
| Performance retainer 3-tier | Full-stack agency, measurable outcome | 60-70% steady, 75% on tier-3 with verification | outcome attribution dispute kills the model |
| Per-output unit billing | Volume-volatile clients, transparent floor | 62-68% if unit price 25% above per-output cost | below $0.18 per token unit, verification eats margin |
| Value-based base-plus-success | Sharp conversion event, owned upside | 55-60% on base, success fees compound margin | without credibility on the conversion event, base alone collapses |

Margin profiles calibrated against FORKOFF agency-margin audit n=14 2026-Q1 + Promethean Research 2025 digital-agency benchmarks. All steady-state, post-amortisation of verification and revision cycles.

Audit your AI agency per-output P&L now

Send us your last 30 days of agency invoices and content output. FORKOFF maps each output to the four-line P&L and surfaces the leaking margin.

How to operationalise the per-output P&L in your first 30 days

Day one is the cost-line capture. The agency picks the six most-shipped content types in the last 30 days and tracks a single output of each through the four cost lines. Model tokens come from the API dashboard. Verification tokens come from a structured-prompt verifier that re-reads the draft against three named claim-types (factual, numeric, source). Human review-minutes come from a structured timer the reviewer starts and stops per output. Revision cycles come from the project tracker. The capture takes 90 minutes per content type at first and drops to 15 minutes per content type by week two as the structured prompts mature. By the end of day one the agency has a six-row P&L with four columns and the dollar amounts that matter.
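
The per-output review timer described above could be as small as a context manager. This is a sketch under stated assumptions: `ReviewLog`, its method names, and the output ID are hypothetical, and the injectable `clock` exists only so the timer can be tested without waiting.

```python
import time
from contextlib import contextmanager
from typing import Callable, Iterator


class ReviewLog:
    """Accumulates human review-minutes per output ID."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # returns seconds; monotonic avoids wall-clock jumps
        self.minutes: dict[str, float] = {}

    @contextmanager
    def timing(self, output_id: str) -> Iterator[None]:
        """Start the timer on entry, stop and record it on exit."""
        start = self._clock()
        try:
            yield
        finally:
            elapsed_min = (self._clock() - start) / 60.0
            self.minutes[output_id] = self.minutes.get(output_id, 0.0) + elapsed_min

    def cost(self, output_id: str, rate_per_min: float = 1.50) -> float:
        """Review cost at the $1.50-per-minute rate quoted in the text."""
        return self.minutes[output_id] * rate_per_min


log = ReviewLog()
with log.timing("linkedin-post-001"):  # hypothetical output ID
    pass  # the reviewer reads and corrects the draft here
print(f"{log.minutes['linkedin-post-001']:.4f} minutes logged")
```

Repeated review passes on the same output accumulate under one ID, so a second look after a revision round is added to the same row.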

Day two through five is the verification-prompt build. The agency writes a structured verification prompt for each content type that surfaces three claim-types per output. The verifier model is the same model as the drafter or one tier smaller; the latency is 5-9 seconds per output and the cost is 15-25% of the drafter's token spend. The structured prompt cuts review-minutes by 35-50% across our audit data because the human reviewer is reading a numbered list ("claim 1: X is true. confirm or correct.") rather than the prose end-to-end. The compounding asset is the verifier-prompt library; one verifier per content type, reused indefinitely.
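
A structured verification prompt and the numbered checklist it feeds might look like the sketch below. The prompt wording, function names, and `<type>: <claim>` line format are assumptions made for illustration; the three claim types and the "confirm or correct" phrasing come from the text. The model call itself is left out, since the text does not name a client library.

```python
CLAIM_TYPES = ("factual", "numeric", "source")


def build_verification_prompt(content_type: str, draft: str) -> str:
    """Assemble a verifier prompt that asks for one claim per line."""
    kinds = ", ".join(CLAIM_TYPES)
    return (
        f"You are verifying a {content_type} draft.\n"
        f"List every claim of these types: {kinds}.\n"
        "Return one claim per line as '<type>: <claim>'.\n"
        "Do not rewrite the draft.\n\n"
        f"DRAFT:\n{draft}"
    )


def format_review_checklist(claims: list[tuple[str, str]]) -> str:
    """Turn (claim_type, claim_text) pairs into the numbered list the
    human reviewer reads instead of the prose end-to-end."""
    lines = []
    for number, (kind, text) in enumerate(claims, start=1):
        if kind not in CLAIM_TYPES:
            raise ValueError(f"unknown claim type: {kind!r}")
        lines.append(f"claim {number} ({kind}): {text} Confirm or correct.")
    return "\n".join(lines)


prompt = build_verification_prompt("LinkedIn post", "Token cost is 1-4% of total.")
checklist = format_review_checklist([
    ("numeric", "Token cost is 1-4% of total."),
    ("source", "The figure comes from the agency-margin audit."),
])
print(checklist)
```

Keeping the prompt builder per content type and the checklist formatter shared is one way to grow the verifier-prompt library without duplicating the reviewer-facing format.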

Day six through 14 is the pricing-deck rebuild. The agency rewrites the pricing deck against the per-output P&L, with explicit cost lines surfaced for each content type, an explicit margin band per pricing tier, and a named verification clause inside every package. The deck reads as a financial statement rather than a deliverable list, and the conversion conversation pivots: the prospect now asks about cost-line transparency rather than price competitiveness, which is the conversation the agency wants. The conversion-rate impact in our audit data is 2.6x against the prior deliverable-deck baseline.

Day 15 through 30 is the steady-state cadence: per-output P&L tracked weekly, margin reviewed monthly, pricing-tier audit quarterly. The compounding asset is the audit history itself; agencies with six months of per-output P&L history can price new engagements within 5% of actual margin from the first proposal, which is the load-bearing capability against high-cadence client pipelines. The adjacent Founder-Led Content Marketing motion sits inside this P&L because the founder-voice content is the highest-margin content type in our data set, and the broader hub is FORKOFF Founder Growth with the supporting playbooks for agent-ready sites, the 48-hour model-drop response, the trust-recovery protocol, and the solo-operator first-five-clients sequence.

Running an AI automation agency for service businesses, here’s what’s actually working and what isn’t

Been running an AI automation agency targeting service businesses for a while now, mainly tradespeople, dental practices and estate agents. Thought I'd write out what's actually worked, what hasn't, and the honest numbers because most agency posts on here are either pure flex or pure doom. If you're thinking about…

We were running our AI content shop at 31% margin for nine months because the dashboard told us token cost was negligible and we never tracked review-minutes. The first time we built the four-line P&L the gap was obvious: review was 84% of cost on landing pages and 71% on email sequences. We added structured-prompt verification, dropped review-minutes by 41%, and shipped at 62% margin within two billing cycles. The pricing deck rewrite added 2.6x conversion on the next ten proposals. The per-output P&L is the entire margin story.

The Bottom Line

AI agency pricing in 2026 is not a packaging problem and it is not a positioning problem. It is a unit-economics problem, and the agencies that internalise it earliest will compound the margin advantage over the rest of the category. The Per-Output P&L is a four-line cost stack: model tokens, verification tokens, human review-minutes, and revision cycles. Token cost is 1-4% of total. The 78-92% slice is human review-minutes priced at $1.50 per minute. Verification is the 10-point margin delta between the unverified 72% line that churns and the verified 62% line that retains, and verification is the load-bearing variable in the entire margin profile.

The four pricing models that survive the per-output P&L are subscription-with-usage-overage, performance-tier retainer, per-output unit billing, and value-based base-plus-success. Each has a margin profile, a fit window, and a failure mode. The misreads cluster into five patterns: deliverable-deck pricing, skipped-verification reporting, project-flat-fee billing, wrong-segment ICP, and bundled-revision absorption. Each misread compresses margin by 10-30 points and the compression compounds over the engagement.

The 30-day operationalisation is direct. Day one is the cost-line capture across six content types. Day two through five is the verification-prompt build. Day six through 14 is the pricing-deck rewrite against the per-output P&L. Day 15 onwards is the steady-state cadence. The compounding asset is the audit history; six months of per-output P&L data lets the agency price new engagements within 5% of actual margin from the first proposal. The math is not close, the framework is shippable inside a month, and the operators who internalise the per-output P&L now will set the pricing benchmark the rest of the category catches up to in 2027.

If a founder is sitting at day zero and wondering whether to keep the project flat-fee model or rebuild against the per-output P&L, the answer is rebuild. Capture the cost lines today, ship the verification prompts inside the week, rewrite the deck inside the month. The margin compounds from the first verified output.

Ship the per-output P&L with FORKOFF this month

FORKOFF builds your verification-prompt library, rewrites your pricing deck against the four-line P&L, and ships at 60%+ margin inside 30 days.

Frequently Asked Questions

AI agency pricing in 2026 is a hybrid of base subscription, performance retainer, and value-based add-ons priced against per-output cost. Project pricing breaks margin once the verification step is added back. The retainer floor sits at $4-10k for niche, $15-25k for performance, and per-output billing only works above $0.18 per token unit.

Healthy AI agencies target 55-65% gross margin steady-state and 70%+ when the per-output verification step is automated by structured prompts rather than manual review. Anything below 50% means human review-minutes are eating the margin. Anything above 80% almost always implies skipped verification, which surfaces as churn 60-90 days later in the audits we have run.

Per-output cost ranges from $10 for a LinkedIn post to $50 for a 2,000-word essay end-to-end at 2026 token rates plus structured human review. Token cost is 1-4% of total. The 78-92% slice is human review-minutes priced at $1.50/min. Skipping review drops cost to $1-3 per output and produces churn within one billing cycle.

Project pricing is the dominant model on the SERP and it is the wrong default. It hides the per-output P&L behind a flat fee. Once an agency tracks model tokens, verification tokens, review-minutes, and revisions per output, project pricing inverts and the agency loses money on the second project of every engagement.

Across 14 FORKOFF agency-margin audits in 2026-Q1 the gross margin shifted from 31% (manual baseline) to 62% (agent-native plus verification) at the same client price point. Tracking per-output P&L was the single load-bearing change. The agencies that skipped tracking sat at the 31% floor and could not surface the failing content type.