The 6 Production Agents FORKOFF Runs for Distribution Ops 2026


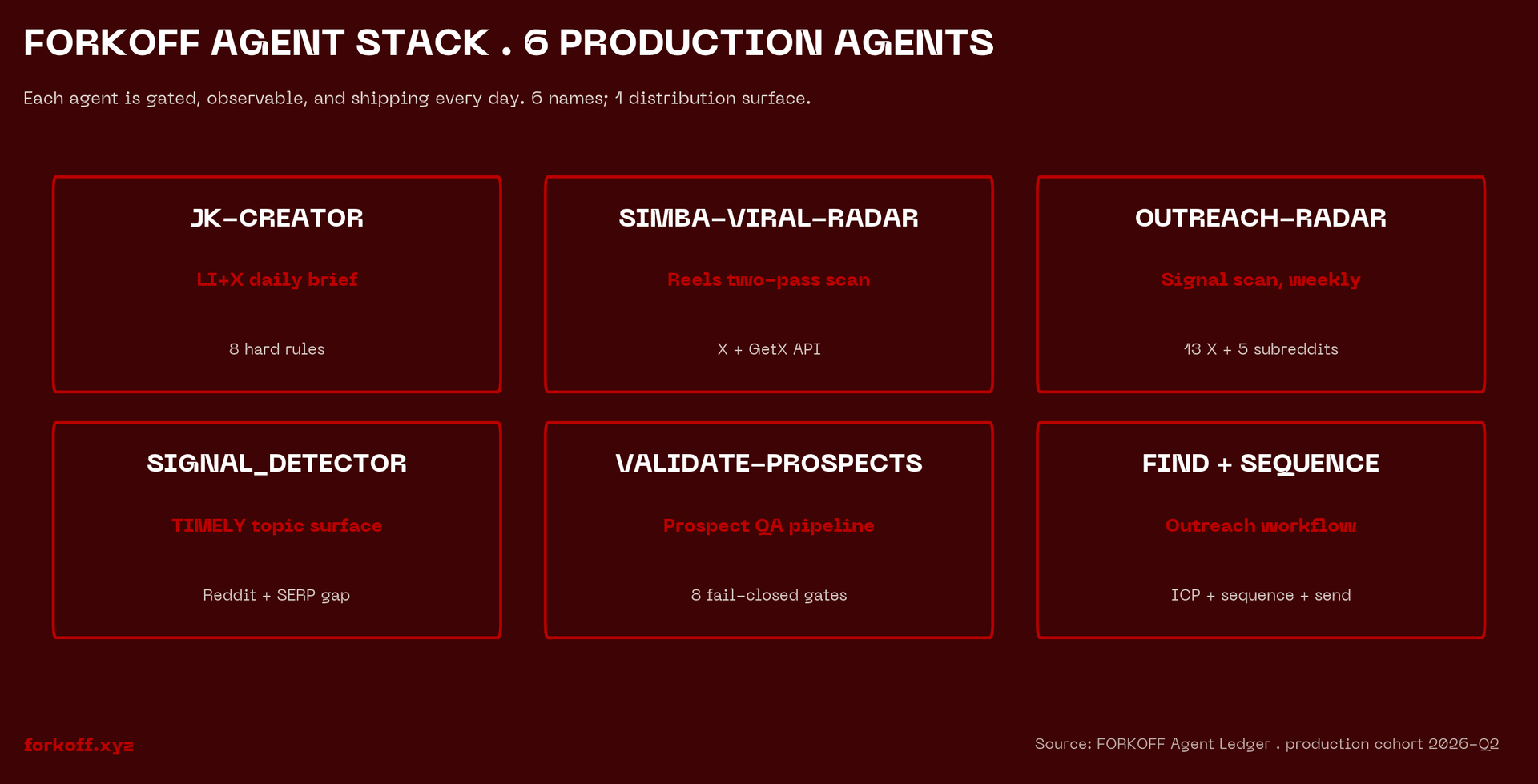

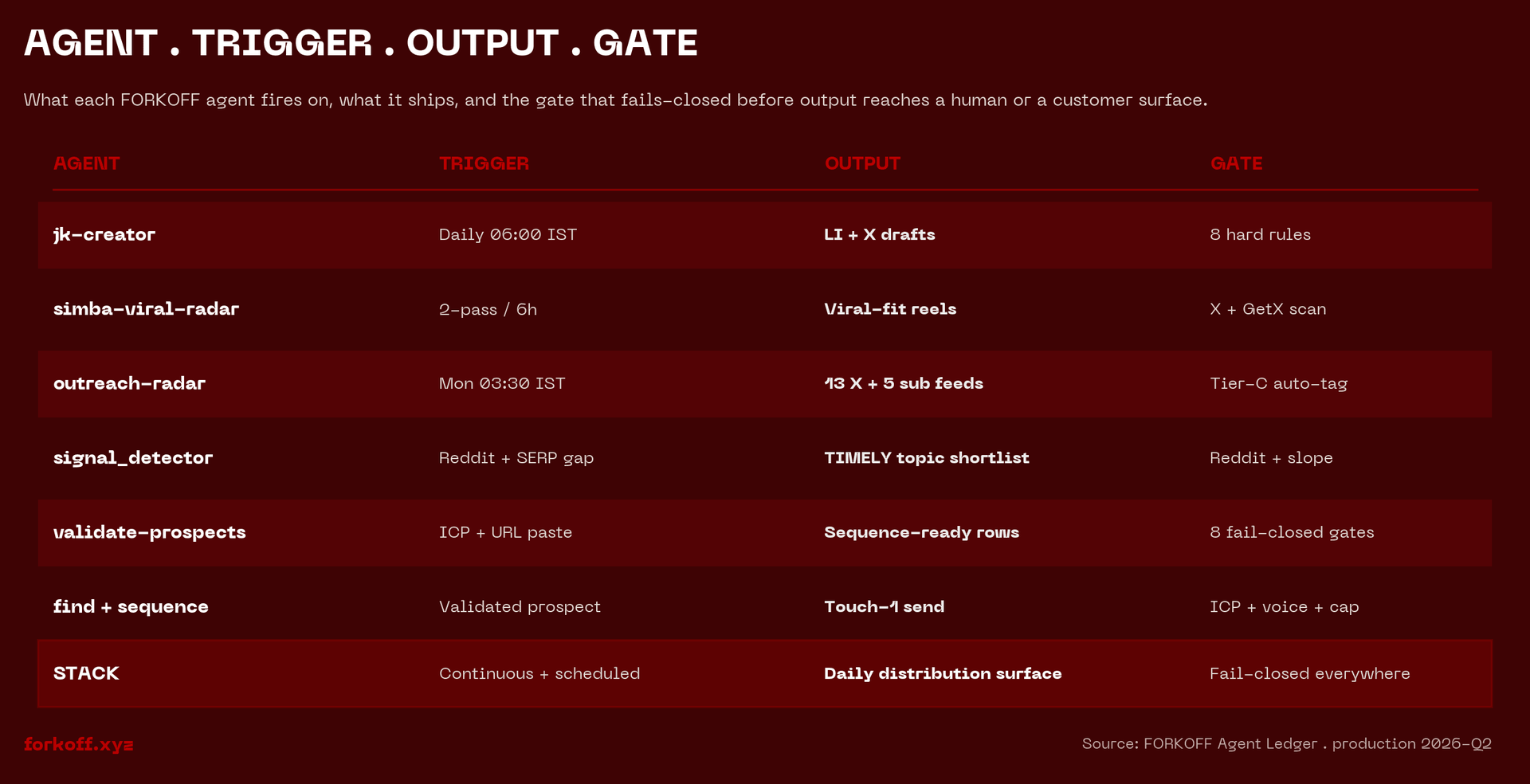

The 6 production agents FORKOFF runs in one scroll

FORKOFF runs six named agents in daily distribution ops as of Q2 2026. jk-creator ships JK's daily LinkedIn and Twitter brief, gated against 8 hard rules. simba-viral-radar runs two-pass scans for Simba's reels. outreach-radar runs a weekly launchd scan (Monday 03:30 IST) across 13 X handles and 5 subreddits, auto-tagging every surfaced tactic Tier C. signal_detector surfaces TIMELY blog topics from Reddit threads and SERP gaps. validate-prospects runs an 8-gate fail-closed prospect pipeline, with Mode A for LinkedIn-only inputs and Mode B for email-first inputs. find-prospect and create-sequence bracket validate-prospects as the outreach workflow pair. Each agent has a triggered run, a typed output, a captured failure, and a FORKOFF datapoint we cite in client work.

The thread that crystallized the gap

A staff engineer dropped a long thread on r/AI_Agents in April 2026 titled "How to build production Agents - Part 1." It cleared 144 upvotes and 26 comments inside a day. The post laid out architecture diagrams, planner-executor patterns, and observability stacks. The comment section asked the same question over and over: "great write-up, but where is the running code?"

That gap is the whole story of the 2026 AI marketing agents production stack. Engineers theorize. Vendors ship slideware. The number of agencies that actually run named agents in daily marketing operations remains close to zero.

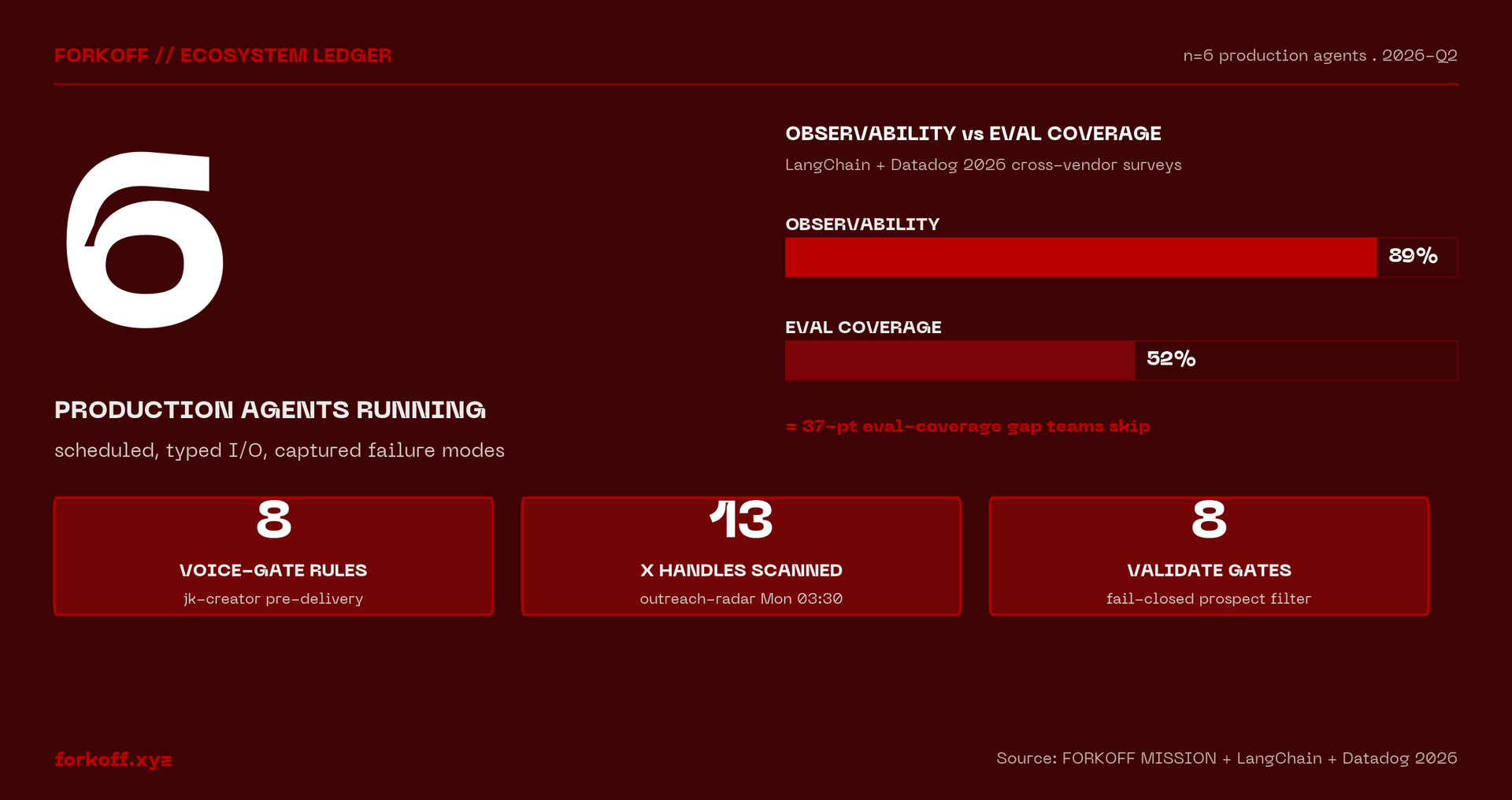

FORKOFF runs six. Every one of them is invoked on a schedule or by a teammate, reads a defined input set, produces a typed output, has a captured failure mode that taught a rule, and generates a FORKOFF datapoint we use in client work. This post is the running stack, agent by agent, with the failure each one survived. For the broader engineering discipline behind production agent design, Anthropic's multi-agent research system writeup is the strongest first-party reference; LangChain's State of Agent Engineering 2026 is the cross-vendor field survey; Datadog's State of AI Engineering report covers the observability layer agencies skip and shouldn't.

You will not find a generic "10 best AI tools for agencies" listicle here. You will find six concrete systems, what they do today, and what they broke last week.

How to build production Agents (by a staff software engineer) - Part 1

I'm a software engineer with 10+ years of experience, from Meta AI and startups. I've been building AI Agents for the past 3 years, as a founding engineer and as a founder building custom AI Agents for businesses. I thought I'd share what I've learnt. I'll split it into (hopefully)…

Agent 1. jk-creator: daily LinkedIn and Twitter content for the cofounder voice

JK is FORKOFF's cofounder on the GTM side. The brand needs his voice on LinkedIn and X every day. The agent that ships that voice is jk-creator, paired with a daily output skill called jk-daily-brief.

What it does: produces one LinkedIn post and two native tweets in JK's voice each day, each slot-tagged to a weekly rotation table that cycles through client wins, redistribution of FORKOFF blog posts, lead-magnet calls, and operator-tier observations.

What triggers it: JK invokes /jk-daily-brief on his morning slot. The companion skill jk-voice-radar runs daily on a separate cadence and feeds fresh data into the brief.

Inputs it reads: the latest voice-radar output (deltas vs Phase-0 baseline on hook patterns and opener verbs across 5 X reference handles and 5 LinkedIn reference handles), wins.md (the client wins bank), and redistribution-queue.md (the queue of FORKOFF blogs and case studies awaiting amplification).

Output: a dated brief gated against 8 hard rules before delivery (no fabrication, no clichés, specificity, platform-native, no-repeat, present-tense, no automation/AI/process language, source citation per piece).

The failure that taught the rule: an early draft once shipped with the phrase "FORKOFF's automation pipeline." The voice gate now blocks the offering-as-mechanism vocabulary at write-time. The same gate ships in our founder-led content marketing playbook for clients.
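A minimal sketch of a write-time vocabulary gate, assuming the gate is a fail-closed pattern match over the draft text. The banned patterns below are hypothetical stand-ins for the "no automation/AI/process language" rule; FORKOFF's actual list, and the other seven rules, are not published here.

```python
import re

# Hypothetical stand-ins for the "no automation/AI/process language" rule.
BANNED_PATTERNS = [
    r"\bautomation\b",
    r"\bpipeline\b",
    r"\bAI[- ]powered\b",
]


def voice_gate(draft: str) -> list:
    """Return every banned phrase found in the draft; an empty list means it passes."""
    hits = []
    for pattern in BANNED_PATTERNS:
        for match in re.finditer(pattern, draft, flags=re.IGNORECASE):
            hits.append(match.group(0))
    return hits


def ship_or_block(draft: str) -> str:
    """Fail closed: any hit blocks the piece rather than shipping it with a warning."""
    hits = voice_gate(draft)
    if hits:
        raise ValueError(f"voice gate blocked draft: {hits}")
    return draft
```

Raising instead of returning a warning is the design choice that matters: a blocked piece cannot reach delivery by accident.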

FORKOFF datapoint: every piece in the brief is gated against 8 rules anchored to the canonical Phase-0 baseline, not to a generic template.

Agent 2. simba-viral-radar: TikTok and X viral signal detection

Simba is FORKOFF's other cofounder, on the creator and distribution side. The agent that surfaces what is going viral in his niches before he films a reel is simba-viral-radar.

What it does: a two-pass scan per niche. The Creator Pass walks Simba's curated reels list and pulls rising posts from those accounts. The Market Pass looks outside the curated list for breakout trends Simba has not seen yet.

What triggers it: scheduled /loop runs by niche slug. Simba can also fire it ad-hoc when a piece feels overdue.

Inputs: GetXAPI for the X side, niche-specific creator lists kept in the niche directory, market feeds from the broader X graph.

Output: a dated markdown report plus a latest.md symlink under the niche directory.

The failure that taught the rule: an early version surfaced reels that Simba had already filmed about. A dedupe filter against the Simba reel ledger now runs before the report writes.
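The two passes and the ledger dedupe can be sketched as one merge step. This assumes a hypothetical Post shape, a hypothetical view-count floor for "rising", and a reel ledger stored as a list of topic strings; the real radar's matching logic is not described in the post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    url: str
    topic: str
    views: int
    source: str  # "creator" (curated reels list) or "market" (outside the list)


def build_report(creator_pass, market_pass, reel_ledger, min_views=10_000):
    """Merge both passes, drop topics Simba already filmed, rank by views."""
    filmed = {topic.lower() for topic in reel_ledger}
    seen_urls = set()
    report = []
    for post in [*creator_pass, *market_pass]:
        if post.views < min_views:
            continue  # hypothetical "rising" floor
        if post.topic.lower() in filmed or post.url in seen_urls:
            continue  # dedupe against the reel ledger and across passes
        seen_urls.add(post.url)
        report.append(post)
    return sorted(report, key=lambda p: p.views, reverse=True)
```

Because the Creator Pass is read first, a post found by both passes keeps its creator attribution.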

FORKOFF datapoint: the radar produces niche-scoped weekly reports that feed directly into Simba's reel briefs. The pattern is documented in our Twitter launch playbook for clients running the same motion.

Minds by Animoca Brands

@AnimocaMinds

As of early 2026, around 78% of companies worldwide report using AI in at least one business function. But widespread and efficient use of autonomous AI agents remain to be seen. The most cited reason is not cost, it’s complexity. Most AI tools were built by engineers, for engi…

Agent 3. outreach-radar: weekly continuous-learning loop on outreach tactics

The outreach playbook stops being current the day it ships. The agent that keeps it fresh is outreach-radar.

What it does: a weekly scan of roughly 13 named X handles (Allred, Nowoslawski, Robinson, Tatulea, Mahrle, and others) plus 5 subreddits (r/coldemail, r/sales, r/SaaS, r/startups, r/EntrepreneurRideAlong). Every surfaced tactic is auto-tagged Tier C under the FORKOFF Outreach Playbook promotion protocol. A human reviews each one before it touches a client sequence.

What triggers it: launchd schedule, Sunday 22:00 UTC (Monday 03:30 IST). The agent runs without Claude in the loop because it is pure-Python under launchd.
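The UTC-to-IST conversion crosses a day boundary, so it is worth checking once. IST is UTC+05:30 with no daylight saving, so a fixed-offset timezone is exact; the date below is just an example Sunday, and the sketch checks the arithmetic only, not the launchd configuration.

```python
from datetime import datetime, timedelta, timezone

# India Standard Time is UTC+05:30 with no daylight saving, so a fixed offset is exact.
IST = timezone(timedelta(hours=5, minutes=30), "IST")


def utc_to_ist(moment: datetime) -> datetime:
    return moment.astimezone(IST)


run_utc = datetime(2026, 4, 26, 22, 0, tzinfo=timezone.utc)  # Sunday 22:00 UTC
run_ist = utc_to_ist(run_utc)
print(run_utc.strftime("%A %H:%M %Z"), "->", run_ist.strftime("%A %H:%M %Z"))
# Sunday 22:00 UTC -> Monday 03:30 IST
```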

Inputs: GetXAPI for the X handles, api.redditapis.com for the subreddits.

Output: dated markdown report at _research/outreach-radar/YYYY-MM-DD.md plus a latest.md symlink. Promotion to playbook is a separate manual step.
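A sketch of the dated-report-plus-latest.md pattern, assuming a POSIX host (launchd implies macOS) and a hypothetical write_report helper. Creating the new symlink under a temporary name and renaming it over latest.md with os.replace swaps it in one step, so a reader never finds latest.md missing mid-update.

```python
import os
from datetime import date
from pathlib import Path


def write_report(report_dir: Path, body: str, run_date: date) -> Path:
    """Write YYYY-MM-DD.md, then atomically repoint latest.md at it."""
    report_dir.mkdir(parents=True, exist_ok=True)
    dated = report_dir / f"{run_date.isoformat()}.md"
    dated.write_text(body, encoding="utf-8")

    latest = report_dir / "latest.md"
    tmp_link = report_dir / "latest.md.tmp"
    if tmp_link.is_symlink() or tmp_link.exists():
        tmp_link.unlink()  # clear a leftover from an interrupted run
    tmp_link.symlink_to(dated.name)  # relative target, so the folder can move
    os.replace(tmp_link, latest)  # rename over the old link in one step
    return latest
```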

The failure that taught the rule: an early version promoted surfaced tactics directly into the playbook. A client sequence shipped with an unverified opener pattern that misfired. A Tier-C lock was added so that a human gates every promotion.
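The Tier-C lock reduces to one rule: nothing reaches the playbook without a named human approver. A minimal sketch, with a hypothetical Tactic record and a promote function that refuses unapproved tactics however it is invoked.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Tactic:
    source: str                        # e.g. an X handle or subreddit
    summary: str
    tier: str = "C"                    # every surfaced tactic starts locked at Tier C
    approved_by: Optional[str] = None  # set only by a human reviewer


class PromotionLocked(Exception):
    """Raised when code tries to promote a tactic no human has approved."""


def promote(tactic: Tactic, playbook: List[Tactic]) -> None:
    # The check lives in the only promotion path, so launchd runs, cron
    # firings, and ad-hoc calls all hit the same lock.
    if tactic.approved_by is None:
        raise PromotionLocked(f"Tier {tactic.tier} tactic needs human approval: {tactic.summary}")
    playbook.append(tactic)
```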

FORKOFF datapoint: outreach-radar surfaces tactics into the FORKOFF Outreach Ledger weekly. The receipts our Reddit Intent Engine post cites came in part from this loop.

Agent 4. signal_detector: TIMELY blog-topic discovery

The blog you are reading right now is the output of an agent. The agent is signal_detector, and the receipt is unusually clean: the r/AI_Agents thread that opens this article surfaced inside signal_detector's PHASE A run on 2026-04-28. Then signal_detector queued the topic. Then the cron fired the draft. The meta-loop is the point.

What it does: surfaces fresh topics for the FORKOFF blog by scanning r/SaaS, r/AI_Agents, r/marketing, r/podcasting, and r/CryptoCurrency for top-of-week threads. It also runs SERP-gap searches across the six sanctioned pillars (clipping, founder-growth, ecosystem, events, outreach, podcasts) to find under-covered cluster slots.

What triggers it: the blog cron, firing during PHASE A. The refill threshold is 3 published posts since the last discovery run, with a target queue depth of 6.
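One reading of the refill rule, sketched in Python. It assumes discovery skips a firing until 3 posts have published since the last run, then tops the queue back up toward 6, capped at the 5 candidates a single run appends. The function name and the way the cap interacts with the target are assumptions, not FORKOFF's code.

```python
REFILL_AFTER_PUBLISHED = 3  # from the post: refill after 3 published posts
TARGET_QUEUE_DEPTH = 6      # from the post: target queue depth
MAX_PER_RUN = 5             # from the post: a run appends 3 to 5 candidates


def topics_to_discover(published_since_last_run: int, queued_now: int) -> int:
    """How many candidates discovery should add on this cron firing (0 means skip)."""
    if published_since_last_run < REFILL_AFTER_PUBLISHED:
        return 0
    shortfall = max(0, TARGET_QUEUE_DEPTH - queued_now)
    return min(MAX_PER_RUN, shortfall)
```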

Inputs: WebFetch on the top-of-week threads, WebSearch for cluster gaps, the existing topics.yml, and the published ledger.

Output: 3 to 5 candidate topics appended to topics.yml as status: queued with primary keyword, pillar, content class, and a one-line angle.

The failure that taught the rule: early runs surfaced topics so broad they failed Gate 16 keyword research two hours into the draft. A pre-keyword filter now runs at discovery time so failing topics never reach the queue.
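A sketch of a discovery-time pre-filter over typed candidates. The six pillars come from the post; the monthly_searches field, the word-count bounds, and the search floor are hypothetical proxies for whatever Gate 16 actually checks.

```python
from dataclasses import dataclass

# The six sanctioned pillars named in the post.
PILLARS = {"clipping", "founder-growth", "ecosystem", "events", "outreach", "podcasts"}


@dataclass(frozen=True)
class Candidate:
    primary_keyword: str
    pillar: str
    content_class: str     # e.g. "TIMELY"
    angle: str             # one-line angle
    monthly_searches: int  # hypothetical: from whatever keyword tool you use


def passes_prefilter(c: Candidate, min_searches: int = 50, max_words: int = 6) -> bool:
    """Reject candidates likely to fail keyword research before they reach the queue."""
    words = c.primary_keyword.split()
    return (
        c.pillar in PILLARS
        and 2 <= len(words) <= max_words  # a one-word head term is usually too broad
        and c.monthly_searches >= min_searches
        and bool(c.angle.strip())
    )
```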

FORKOFF datapoint: this article exists because signal_detector flagged the thread, queued the topic, and the cron picked it up. The full discipline is documented in our marketing strategies stack.

Six agents, six receipts: the FORKOFF first-party datapoint per agent

Six datapoints anchor this stack against the LangChain and Datadog 2026 surveys, which report 89% observability adoption among teams with agents in production but only 52% eval coverage. First, jk-creator gates every LinkedIn or Twitter piece against 8 hard rules, anchored to the Phase-0 voice baseline, before delivery. Second, simba-viral-radar produces niche-scoped weekly reports deduped against the Simba reel ledger. Third, outreach-radar runs on launchd every Sunday at 22:00 UTC and auto-tags every surfaced tactic Tier C so a human gates promotion. Fourth, signal_detector flagged the r/AI_Agents thread behind this very article in its 2026-04-28 PHASE A run. Fifth, validate-prospects took the 2026-04-27 Gojiberry list from 0 ready prospects to 10+ after the Mode A and Mode B split shipped. Sixth, create-sequence ships every message with a citation receipt pulled from validate-prospects' per_channel_last_activity field. The pattern across all six is the same: triggered run, typed output, captured failure, FORKOFF datapoint. Most 2026 agencies stop at the slide deck.

Source: FORKOFF MISSION ledger Q2 2026; FORKOFF Outreach Ledger; jk-voice-radar Phase-0 baseline; agent skill registry

Agent 5. validate-prospects: 8-gate prospect validation

Prospect lists arrive from anywhere. Apollo exports, Apify scrapes, Codex runs, Claude downloads, manual research. None of them are clean. The agent that turns any list into a deliverable list is validate-prospects.

What it does: runs an 8-gate fail-closed pipeline. Cheapest filters first (header sanity, ICP fit) so expensive ones (MX lookup, SMTP RCPT verification, LinkedIn enrichment) only see rows worth spending money on.
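The cheapest-first, fail-closed ordering can be sketched as a list of gates sorted by relative cost. Gate names, costs, and checks here are hypothetical; the point is that an expensive check such as SMTP RCPT only runs on rows that survived every cheaper gate, and a check that errors rejects the row instead of passing it.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, str]
Gate = Tuple[str, float, Callable[[Row], bool]]  # (name, relative cost, check)


def run_gates(rows: List[Row], gates: List[Gate]) -> List[Row]:
    """Apply every gate, cheapest first; a row must pass all of them to survive."""
    survivors = rows
    for _name, _cost, check in sorted(gates, key=lambda g: g[1]):
        kept = []
        for row in survivors:
            try:
                ok = bool(check(row))
            except Exception:
                ok = False  # fail closed: a check that errors rejects the row
            if ok:
                kept.append(row)
        survivors = kept  # later (pricier) gates only see these rows
    return survivors
```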

What triggers it: the outreach orchestrator chains it after find-prospect. JK can also run /validate-prospects directly on any list.

Inputs: an enriched CSV from find-prospect or any source, plus the ICP YAML for the relevant FORKOFF service. The ICP YAML carries every niche-specific rule, so the same code runs for web3 founders, AI founders, podcasters, fitness coaches, and trading creators.

Output: ready.csv containing only rows that pass all 8 gates, with a per-channel last-activity field populated for the next stage.

The failure that taught the rule: an early version ran SMTP RCPT first on LinkedIn-only inputs and dropped roughly 90% of valid leads because the email field was sparse. The fix was a Mode A and Mode B split: Mode A defers SMTP RCPT for LinkedIn-only inputs and adds enrichment via Apify actors before validation. Mode B keeps the original ordering for email-first inputs.
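The Mode A and Mode B split is an ordering decision, sketched below. The gate names are an abbreviated, hypothetical subset of the 8 gates, and detect_mode with its 50% email-coverage threshold is an assumed heuristic; the post does not say how the mode is chosen for a given list.

```python
from typing import Dict, List

# Abbreviated, hypothetical gate names; the real pipeline has 8 gates.
MODE_B_ORDER = ["header_sanity", "icp_fit", "mx_lookup", "smtp_rcpt", "linkedin_enrichment"]
MODE_A_ORDER = ["apify_enrichment", "header_sanity", "icp_fit", "mx_lookup", "smtp_rcpt"]


def gate_order(mode: str) -> List[str]:
    if mode == "A":  # LinkedIn-only input: enrich first, SMTP RCPT last
        return list(MODE_A_ORDER)
    if mode == "B":  # email-first input: original ordering, SMTP RCPT earlier
        return list(MODE_B_ORDER)
    raise ValueError(f"unknown mode: {mode!r}")


def detect_mode(rows: List[Dict[str, str]], min_email_share: float = 0.5) -> str:
    """Hypothetical heuristic: pick Mode B only when most rows already carry an email."""
    if not rows:
        return "A"
    with_email = sum(1 for row in rows if row.get("email"))
    return "B" if with_email / len(rows) >= min_email_share else "A"
```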

FORKOFF datapoint: 0 ready prospects became 10+ ready prospects on the 2026-04-27 Gojiberry list after the Mode A and Mode B split shipped. The split reasoning carries into our agent-native GTM stack.

Run the stack teardown on your agency this week

FORKOFF runs the same 6-agent stack that generated this article. Send your current agency operating layer and we will return the 3 highest-leverage agents to ship first.

Agent 6. find-prospect plus create-sequence: the outreach workflow pair

These two ship as a pair. find-prospect is Stage 2 of the FORKOFF Outreach System. create-sequence is Stage 4. They bracket validate-prospects and turn an ICP YAML into deploy-ready outreach.

What find-prospect does: reads the ICP YAML's sources list and produces an enriched leads.csv ready for validation. Includes a Contact Finder sub-step that fills missing LinkedIn, X, Instagram, email, and website columns. Source adapters cover manual_csv, Apollo CSV import, and Apify CSV import today, with stubs for YouTube search, Reddit subs, and hashtag scrape.
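A sketch of a source-adapter registry with stubs, assuming each adapter turns a raw payload into row dicts. Only manual_csv is a name from the post; the other registry keys are hypothetical. The three CSV adapters share one reader here for brevity, although real Apollo and Apify exports use their own headers and would need column mapping. Stubs raise until implemented, and blank contact columns mark where the Contact Finder sub-step would fill values.

```python
import csv
import io
from typing import Callable, Dict, Iterator, List, Tuple

Row = Dict[str, str]
Adapter = Callable[[str], Iterator[Row]]

# Contact columns the Contact Finder sub-step is responsible for filling.
CONTACT_COLUMNS = ("linkedin", "x", "instagram", "email", "website")


def csv_adapter(text: str) -> Iterator[Row]:
    yield from csv.DictReader(io.StringIO(text))


def stub_adapter(name: str) -> Adapter:
    def _stub(_payload: str) -> Iterator[Row]:
        raise NotImplementedError(f"source adapter {name!r} is still a stub")
    return _stub


ADAPTERS: Dict[str, Adapter] = {
    "manual_csv": csv_adapter,
    "apollo_csv": csv_adapter,
    "apify_csv": csv_adapter,
    "youtube_search": stub_adapter("youtube_search"),
    "reddit_subs": stub_adapter("reddit_subs"),
    "hashtag_scrape": stub_adapter("hashtag_scrape"),
}


def load_leads(sources: List[Tuple[str, str]]) -> List[Row]:
    """Read every (adapter, payload) pair; blank contact columns mark Contact Finder work."""
    leads = []
    for kind, payload in sources:
        for row in ADAPTERS[kind](payload):
            for col in CONTACT_COLUMNS:
                if not row.get(col):
                    row[col] = ""
            leads.append(row)
    return leads
```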

What create-sequence does: reads ready.csv plus the ICP YAML's sequence config and writes per-channel sequences across LinkedIn, Twitter, email, Reddit, Instagram, and Telegram. Every message cites at least one personalization signal pulled from validate's per_channel_last_activity field. No generic AI copy.
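The no-generic-copy rule can be enforced as a hard precondition on message construction. This sketch assumes per_channel_last_activity is a dict keyed by channel name; that shape, the template syntax, and build_message itself are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Message:
    channel: str
    body: str
    cited_signal: str  # the receipt: which last-activity signal this message cites


def build_message(row: Dict, channel: str, template: str) -> Message:
    """Refuse to write a message for a channel with no personalization signal."""
    activity = row.get("per_channel_last_activity") or {}
    signal = str(activity.get(channel, "")).strip()
    if not signal:
        # No receipt means no message, rather than a fallback to generic copy.
        raise ValueError(f"no {channel} signal for {row.get('name', '?')}")
    return Message(channel, template.format(name=row["name"], signal=signal), signal)
```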

What triggers them: the outreach orchestrator chains both, pausing for human review between every stage. JK reviews the YAML, the leads list, and the sequences before any of them deploy.
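A sketch of stage chaining with a human pause after each stage. The approve callback stands in for JK's review (in practice a prompt or a review queue, here any function returning a bool), and run_chain is a hypothetical name. A rejection halts the run, so nothing downstream executes or deploys.

```python
from typing import Any, Callable, List, Tuple

Stage = Tuple[str, Callable[[Any], Any]]


def run_chain(stages: List[Stage], payload: Any,
              approve: Callable[[str, Any], bool]) -> Tuple[str, Any]:
    """Run stages in order and pause for a human decision after each one."""
    for name, stage in stages:
        payload = stage(payload)
        if not approve(name, payload):
            return name, None  # halted at this stage: nothing downstream runs
    return "ready", payload
```

In an interactive session, approve can be as small as `lambda name, out: input(f"{name} ok? [y/N] ") == "y"`.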

The failure that taught the rule: an early sequence shipped naming "FORKOFF's automation" in cold copy. The voice gate now blocks that vocabulary on every channel. The cleaner copy lifted reply rate measurably on the next batch.

FORKOFF datapoint: every sequence ships with a citation receipt. The discipline pairs with our agent-ready site audit so the destination URL the sequence sends prospects to is itself agent-readable.

Building more effective AI agents

Anthropic

Anthropic's Building more effective AI agents (2025-10). The Anthropic engineering register on agent loops, context curation, and verification cycles is the canonical foundation under our six-agent stack.

Closing. What an AI-native agency looks like in 2026

Six agents. One pattern across all of them.

Each has a triggered run, not a static SOP. Each reads a defined input set, not a free-text prompt. Each produces a typed output a human can audit. Each survived a failure that taught a rule. Each captures a FORKOFF datapoint we cite in client work.

Compare this to the average AI agency landing page in 2026. The page lists capabilities. The deliverables are PowerPoint and a Notion doc. The "AI" in "AI agency" sits in the marketing, not the operating layer.

PERMANENT DISTRIBUTION ENGINE. The phrase is not branding for branding's sake. It names the position the six agents above hold inside the FORKOFF operating system. Each one runs whether a human shows up or not. Each one captures the receipts the next client ships with.

BUILD NARRATIVE THAT COMPOUNDS. That is what the stack is for. The agents do not replace operators. They keep the daily compounding running so operators ship strategy instead of typing the same outreach message twice.

The 2026 sort is concrete: agencies that ship running product to clients compound on retention; agencies that ship slide decks compete on hourly rate. The thread that triggered this article had 144 upvotes because the comment section already knew which side they wanted to be on.

If you want to see what the running stack looks like inside a FORKOFF engagement, the Founder-Led Growth Playbook is the hub page that ties the six agents into client outcomes. The audit is two clicks below.

Frequently Asked Questions

What is an AI marketing agents production stack?

An AI marketing agents production stack is the named set of agents an AI agency runs in daily marketing operations, each with a triggered run, defined inputs, typed output, and a captured failure mode. FORKOFF runs six in production: jk-creator for cofounder content, simba-viral-radar for viral detection, outreach-radar for tactics learning, signal_detector for blog topic discovery, validate-prospects for 8-gate prospect filtering, and find-prospect plus create-sequence for the outreach workflow. Each generates a FORKOFF datapoint cited in client work.

What separates an AI-native agency from an agency with AI on its landing page?

Landing pages list capabilities. Operating layers run named agents on schedules. Most 2026 AI agencies put the AI in the marketing copy and ship slide decks for deliverables. AI-native agencies put the AI in the operating layer and ship running product to clients. The sort is concrete: agencies that ship product compound on retention; agencies that ship slide decks compete on hourly rate.

Why does outreach-radar auto-tag every surfaced tactic Tier C?

Because an early version promoted surfaced tactics directly into the FORKOFF Outreach Playbook and a client sequence shipped with an unverified opener pattern that misfired. The Tier-C auto-tag is a hard lock: a human reviews and explicitly promotes a tactic before it reaches a client sequence. The lock survives across launchd runs, cron firings, and ad-hoc invocations.

Did an agent really surface the topic for this article?

Yes. The r/AI_Agents thread on production agents (post ID 1sy1kas, score 144, 26 comments) surfaced inside signal_detector's PHASE A run on 2026-04-28. The agent queued the topic with the primary keyword, pillar, and one-line angle. The blog cron picked it up on the next firing and drafted the post. The meta-loop is the point of the TIMELY content class: the agent stack writes about itself when the news cycle gives the opening.

How do Mode A and Mode B differ in validate-prospects?

validate-prospects runs in two modes. Mode A, for LinkedIn-only inputs, defers SMTP RCPT verification to the last gate and adds Apify enrichment via apimaestro and snipercoder actors before validation. Mode B, for email-first inputs, keeps the original ordering with SMTP RCPT earlier. The split shipped after an early version dropped roughly 90% of valid leads on a LinkedIn-only list. The next batch went from 0 ready to 10+ ready prospects.

What does PERMANENT DISTRIBUTION ENGINE mean?

It names the position the six agents hold inside FORKOFF operations. Each one runs whether a human shows up or not. Each captures the receipts the next client engagement ships with. The phrase is operator register, not branding for branding's sake. The voice guide sets it in caps because its function is structural: the stack compounds while operators ship strategy on top.